[!TIP] Ongoing and occasional updates and improvements.

openshift 4.15 multi-network policy with ovn on 2nd network

Our customers share a common requirement: using OpenShift CNV as a pure virtual machine operation and management platform. They want to deploy VMs on CNV where the VMs’ network remains completely separate from the container platform’s network. In essence, each VM should have a single network interface card connected to the external network. Concurrently, OpenShift should offer flexible control over inbound and outbound traffic on its platform level to ensure security.

Currently, OpenShift allows the creation of a secondary network plane. On this plane, users can create overlay or underlay networks, and importantly, craft network policies using NetworkPolicy resources.

Here, we will demonstrate this by creating a secondary OVN network plane.

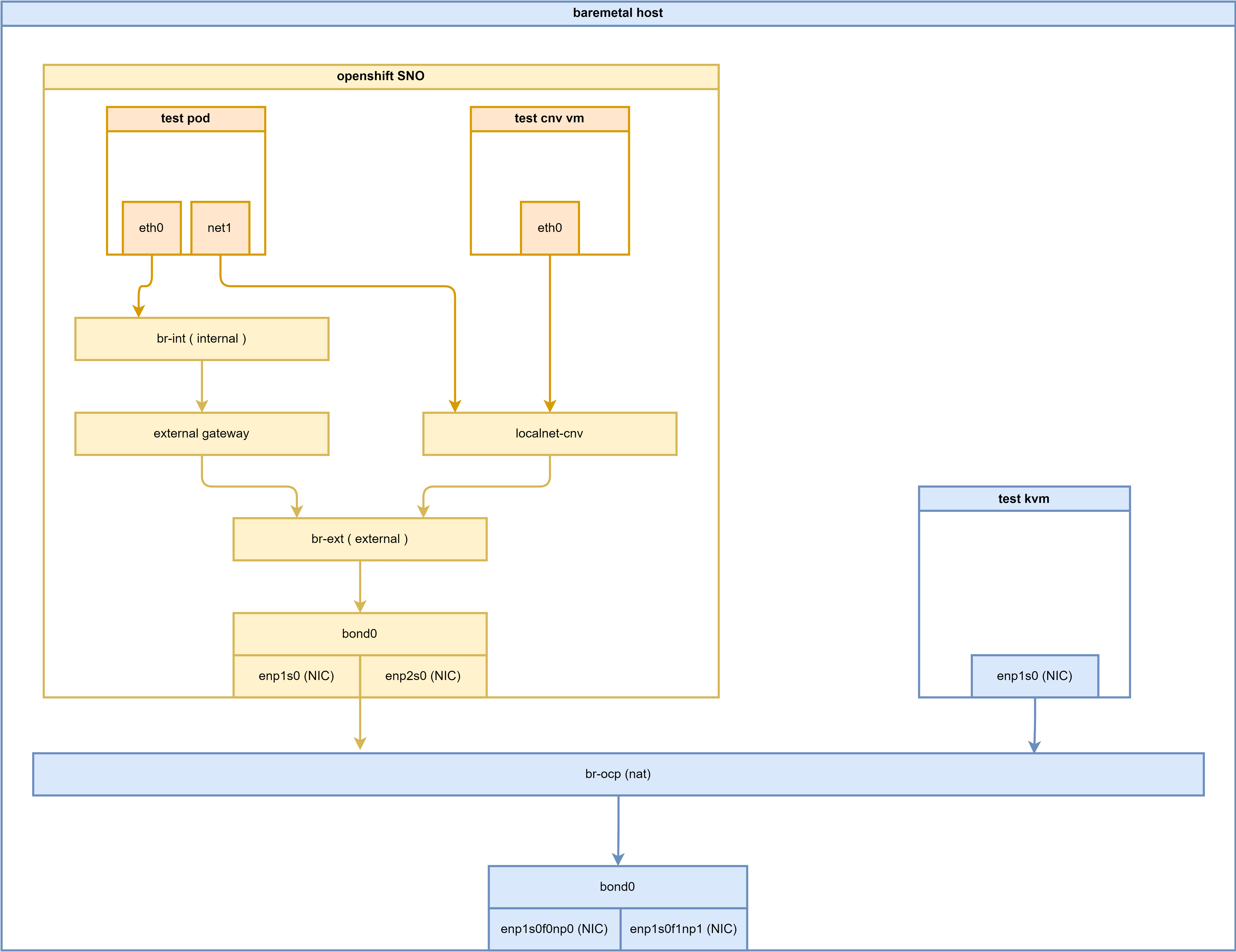

Below is the deployment architecture diagram for this experiment:

The solution described here is supported by the BU, per a Slack chat.

Here is the reference document

ovn on 2nd network

Okay, let’s start installing OVN on the second network plane. There is comprehensive official documentation available that we can follow.

- https://docs.openshift.com/container-platform/4.16/networking/multiple_networks/configuring-additional-network.html#configuration-ovnk-additional-networks_configuring-additional-network

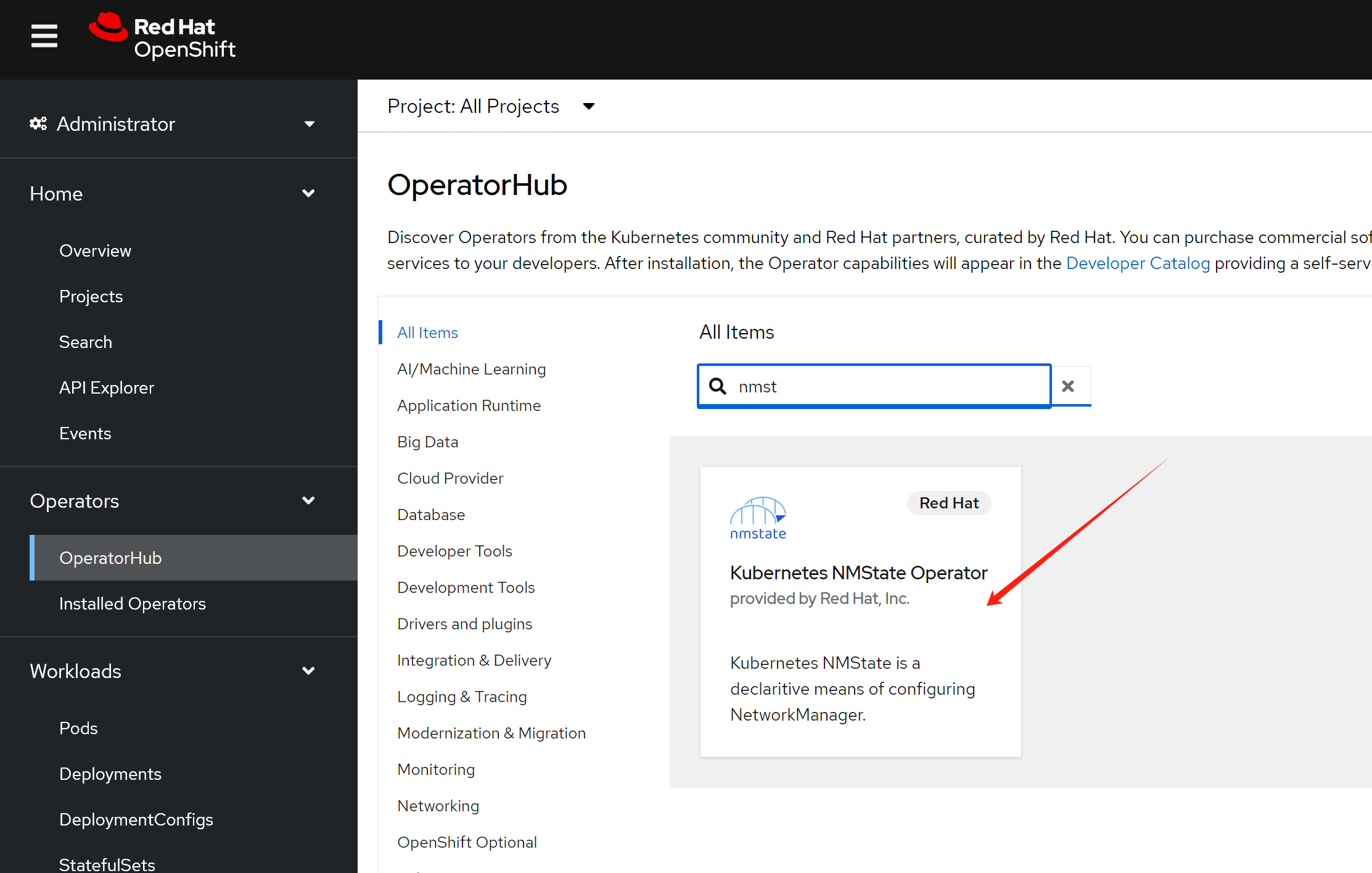

Install the NMState operator first.

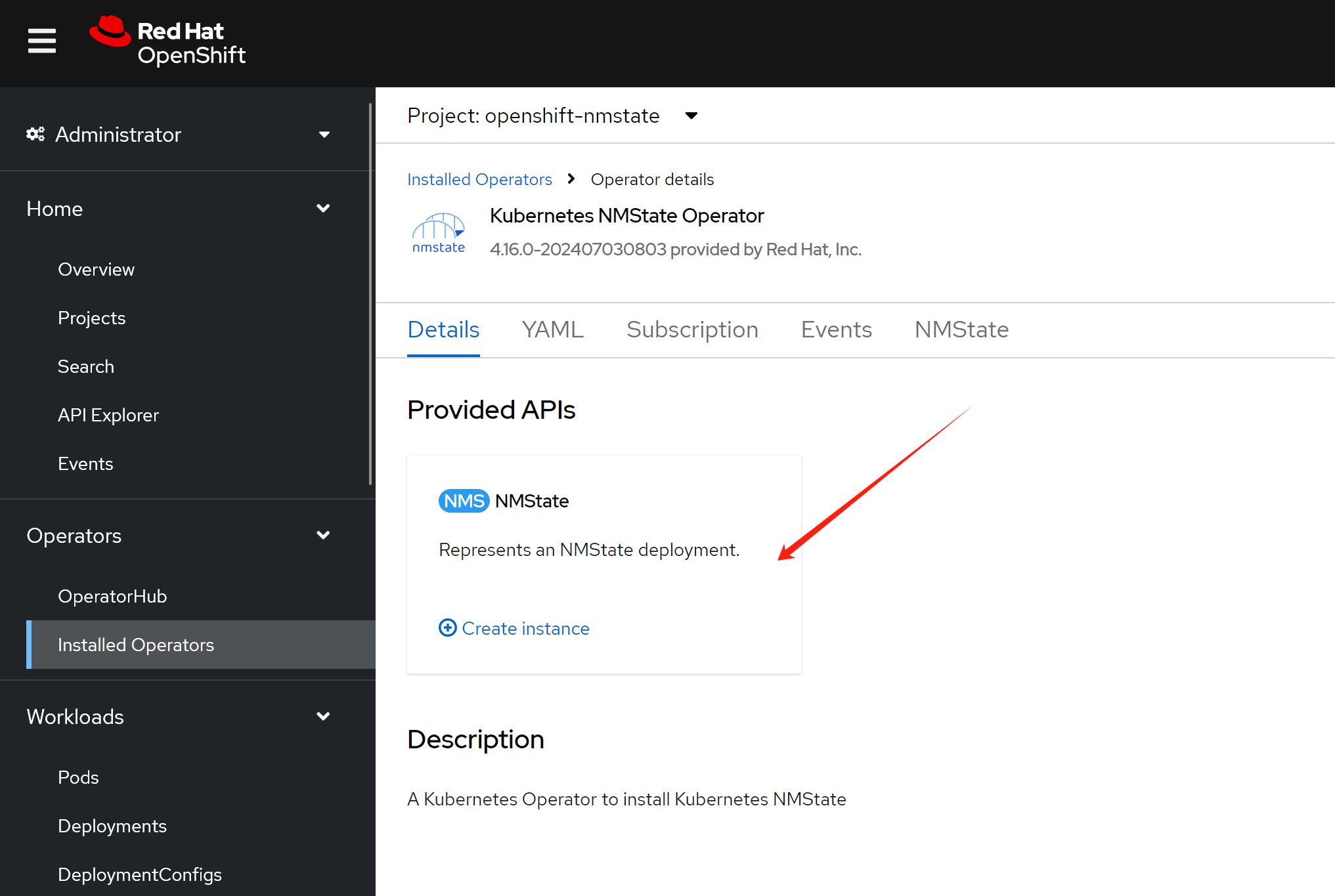

Create a deployment with the default settings.

To create a second network plane, we first need to consider whether to use an overlay or underlay. In the past, OpenShift only supported underlay network planes, such as macvlan. However, OpenShift now offers ovn, an overlay technology, as an option. In this case, we will use ovn to create the second overlay network plane.

When creating the ovn second network plane, there are two choices:

- Connect this network plane to the default ovn network plane and attach it to br-ex.

- Create another ovs bridge and attach it to the physical network, effectively separating it from the default ovn network plane.

This second ovn network plane is a Layer 2 network. We can choose to configure IPAM, which allows k8s/ocp to assign IP addresses to pods. However, our ultimate goal is the cnv scenario, in which IP addresses are configured on the VM or obtained through DHCP. Therefore, we will not configure IPAM for this ovn second network plane.

# create the mapping
oc delete -f ${BASE_DIR}/data/install/ovn-mapping.conf

cat << EOF > ${BASE_DIR}/data/install/ovn-mapping.conf
---
apiVersion: nmstate.io/v1
kind: NodeNetworkConfigurationPolicy
metadata:
  name: mapping
spec:
  nodeSelector:
    node-role.kubernetes.io/worker: ''
  desiredState:
    ovn:
      bridge-mappings:
      - localnet: localnet-cnv
        bridge: br-ex
        state: present
EOF

oc apply -f ${BASE_DIR}/data/install/ovn-mapping.conf

# alternative (second choice above): a dedicated ovs bridge instead of br-ex, kept for reference
# oc delete -f ${BASE_DIR}/data/install/ovn-mapping.conf

# cat << EOF > ${BASE_DIR}/data/install/ovn-mapping.conf
# ---
# apiVersion: nmstate.io/v1
# kind: NodeNetworkConfigurationPolicy
# metadata:
#   name: mapping
# spec:
#   nodeSelector:
#     node-role.kubernetes.io/worker: ''
#   desiredState:
#     interfaces:
#     - name: ovs-br-cnv
#       description: |-
#         A dedicated OVS bridge with eth1 as a port
#         allowing all VLANs and untagged traffic
#       type: ovs-bridge
#       state: up
#       bridge:
#         options:
#           stp: true
#         port:
#         - name: enp9s0
#     ovn:
#       bridge-mappings:
#       - localnet: localnet-cnv
#         bridge: ovs-br-cnv
#         state: present
# EOF

# oc apply -f ${BASE_DIR}/data/install/ovn-mapping.conf

# create the network attachment definition
oc delete -f ${BASE_DIR}/data/install/ovn-k8s-cni-overlay.conf

var_namespace='llm-demo'

cat << EOF > ${BASE_DIR}/data/install/ovn-k8s-cni-overlay.conf
apiVersion: k8s.cni.cncf.io/v1
kind: NetworkAttachmentDefinition
metadata:
  name: $var_namespace-localnet-network
  namespace: $var_namespace
spec:
  config: |-
    {
      "cniVersion": "0.3.1",
      "name": "localnet-cnv",
      "type": "ovn-k8s-cni-overlay",
      "topology":"localnet",
      "_subnets": "192.168.99.0/24",
      "_vlanID": 33,
      "_mtu": 1500,
      "netAttachDefName": "$var_namespace/$var_namespace-localnet-network",
      "_excludeSubnets": "10.100.200.0/29"
    }
EOF

oc apply -f ${BASE_DIR}/data/install/ovn-k8s-cni-overlay.conf
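Before moving on, it can help to confirm that the bridge mapping was actually applied. The following is a minimal sketch, assuming the NodeNetworkConfigurationPolicy reports an `Available` condition in its status (as kubernetes-nmstate normally does). The helper name `nncp_available` is hypothetical and not part of any tool.

```bash
# Hypothetical helper: succeeds when the named NNCP reports Available=True.
# Assumes `oc` is already logged in to the cluster.
nncp_available() {
  local name=$1 status
  status=$(oc get nncp "$name" \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}')
  [ "$status" = "True" ]
}

# Usage (not executed here):
#   nncp_available mapping && echo "bridge mapping applied"
#   oc get net-attach-def -n llm-demo
```

If the check fails, the per-node enactments (`oc get nnce`) are usually the next place to look.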

try with pod

With the second network plane in place, we’ll start by testing network connectivity using pods. We test pods first because the VMs inside cnv/kubevirt run within pods. Testing the pod scenario makes the subsequent VM scenario much easier.

We’ll create three pods, each attached to both the default OVN network plane and the second OVN network plane. We’ll also use pod annotations to specify a second IP address for each pod.

Finally, we’ll test connectivity from the pods to various target IP addresses.
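The connectivity checks in this document are run by hand and interrupted with Ctrl-C. As a rough sketch, the same checks could be summarized by parsing the `ping` summary line. The function names below are hypothetical, and the `ping -c 3 -W 1` flags in the usage comment assume the iputils `ping` shipped in the test image.

```bash
# Extract the packet-loss percentage from ping output read on stdin.
ping_loss_percent() {
  sed -n 's/.* \([0-9][0-9.]*\)% packet loss.*/\1/p'
}

# Succeeds when the ping summary on stdin reports less than 100% loss.
summary_reachable() {
  local loss
  loss=$(ping_loss_percent)
  [ -n "$loss" ] && [ "${loss%.*}" -lt 100 ]
}

# Usage (not executed here; -it is dropped because no terminal is needed):
#   oc exec tinypod -- ping -c 3 -W 1 192.168.77.92 | summary_reachable \
#     && echo reachable || echo blocked
```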

# create demo pods
oc delete -f ${BASE_DIR}/data/install/pod.yaml

var_namespace='llm-demo'

cat << EOF > ${BASE_DIR}/data/install/pod.yaml
---
apiVersion: v1
kind: Pod
metadata:
  annotations:
    k8s.v1.cni.cncf.io/networks: '[
      {
        "name": "$var_namespace-localnet-network",
        "_mac": "02:03:04:05:06:07",
        "_interface": "myiface1",
        "ips": [
          "192.168.77.91/24"
        ]
      }
    ]'
  name: tinypod
  namespace: $var_namespace
  labels:
    app: tinypod
spec:
  containers:
  - image: quay.io/wangzheng422/qimgs:rocky9-test-2024.06.17.v01
    imagePullPolicy: IfNotPresent
    name: agnhost-container
    command: [ "/bin/bash", "-c", "--" ]
    args: [ "tail -f /dev/null" ]
---
apiVersion: v1
kind: Pod
metadata:
  annotations:
    k8s.v1.cni.cncf.io/networks: '[
      {
        "name": "$var_namespace-localnet-network",
        "_mac": "02:03:04:05:06:07",
        "_interface": "myiface1",
        "ips": [
          "192.168.77.92/24"
        ]
      }
    ]'
  name: tinypod-01
  namespace: $var_namespace
  labels:
    app: tinypod-01
spec:
  containers:
  - image: quay.io/wangzheng422/qimgs:rocky9-test-2024.06.17.v01
    imagePullPolicy: IfNotPresent
    name: agnhost-container
    command: [ "/bin/bash", "-c", "--" ]
    args: [ "tail -f /dev/null" ]
---
apiVersion: v1
kind: Pod
metadata:
  annotations:
    k8s.v1.cni.cncf.io/networks: '[
      {
        "name": "$var_namespace-localnet-network",
        "_mac": "02:03:04:05:06:07",
        "_interface": "myiface1",
        "ips": [
          "192.168.77.93/24"
        ]
      }
    ]'
  name: tinypod-02
  namespace: $var_namespace
  labels:
    app: tinypod-02
spec:
  containers:
  - image: quay.io/wangzheng422/qimgs:rocky9-test-2024.06.17.v01
    imagePullPolicy: IfNotPresent
    name: agnhost-container
    command: [ "/bin/bash", "-c", "--" ]
    args: [ "tail -f /dev/null" ]
EOF

oc apply -f ${BASE_DIR}/data/install/pod.yaml

# testing with ping to another pod

oc exec -it tinypod -- ping 192.168.77.92

# PING 192.168.77.92 (192.168.77.92) 56(84) bytes of data.

# 64 bytes from 192.168.77.92: icmp_seq=1 ttl=64 time=0.411 ms

# 64 bytes from 192.168.77.92: icmp_seq=2 ttl=64 time=0.114 ms

# ....

# testing with ping to another vm

oc exec -it tinypod -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# 64 bytes from 192.168.77.10: icmp_seq=1 ttl=64 time=1.09 ms

# 64 bytes from 192.168.77.10: icmp_seq=2 ttl=64 time=0.310 ms

# ....

# ping to outside world through default network

oc exec -it tinypod -- ping 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=114 time=1.26 ms

# 64 bytes from 8.8.8.8: icmp_seq=2 ttl=114 time=0.795 ms

# ......

# trace the path to 8.8.8.8, we can see it goes through default network

oc exec -it tinypod -- tracepath -4 -n 8.8.8.8

# 1?: [LOCALHOST] pmtu 1400

# 1: 8.8.8.8 0.772ms asymm 2

# 1: 8.8.8.8 0.328ms asymm 2

# 2: 100.64.0.2 0.518ms asymm 3

# 3: 192.168.99.1 0.758ms

# 4: 169.254.77.1 0.605ms

# 5: 10.253.38.104 0.561ms

# 6: 10.253.37.232 0.563ms

# 7: 10.253.37.194 0.732ms asymm 8

# 8: 147.28.130.14 0.983ms

# 9: 198.16.4.121 0.919ms asymm 13

# 10: no reply

# ....

oc exec -it tinypod -- ip a

# 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

# link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

# inet 127.0.0.1/8 scope host lo

# valid_lft forever preferred_lft forever

# inet6 ::1/128 scope host

# valid_lft forever preferred_lft forever

# 2: eth0@if116: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default

# link/ether 0a:58:0a:84:00:65 brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet 10.132.0.101/23 brd 10.132.1.255 scope global eth0

# valid_lft forever preferred_lft forever

# inet6 fe80::858:aff:fe84:65/64 scope link

# valid_lft forever preferred_lft forever

# 3: net1@if118: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default

# link/ether 0a:58:c0:a8:4d:5b brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet 192.168.77.91/24 brd 192.168.77.255 scope global net1

# valid_lft forever preferred_lft forever

# inet6 fe80::858:c0ff:fea8:4d5b/64 scope link

# valid_lft forever preferred_lft forever

oc exec -it tinypod -- ip r

# default via 10.132.0.1 dev eth0

# 10.132.0.0/23 dev eth0 proto kernel scope link src 10.132.0.101

# 10.132.0.0/14 via 10.132.0.1 dev eth0

# 100.64.0.0/16 via 10.132.0.1 dev eth0

# 172.22.0.0/16 via 10.132.0.1 dev eth0

# 192.168.77.0/24 dev net1 proto kernel scope link src 192.168.77.91

try with multi-network policy

In real-world customer scenarios, the goal is to control network traffic flowing in and out on the second network plane, ensuring security. Here, we can use multi-network policy to fulfill this requirement. Multi-network policy shares the same syntax as network policy, but the difference lies in specifying the effective network plane.

We first use a default rule to deny all incoming and outgoing traffic. Then, we add rules to allow specific traffic. As our configured network lacks IPAM settings, Kubernetes cannot determine the IP addresses of pods on the second network plane. Therefore, we can only restrict incoming and outgoing external targets using IP addresses, not labels.
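Because peers can only be expressed as IP addresses on this network, allow rules tend to become lists of `/32` entries. The sketch below shows one way to generate those entries from a list of addresses. The helper name `ipblock_peers` is hypothetical, and the indentation assumes the output is pasted under a `- from:` or `- to:` key that is itself indented by two spaces.

```bash
# Print one ipBlock peer (as a YAML list item) per IPv4 address given.
ipblock_peers() {
  local ip
  for ip in "$@"; do
    printf '    - ipBlock:\n        cidr: %s/32\n' "$ip"
  done
}

# Example: peers for the gateway and one test pod.
ipblock_peers 192.168.77.1 192.168.77.92
```

The printed fragment can be dropped into a MultiNetworkPolicy heredoc instead of writing each peer by hand.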

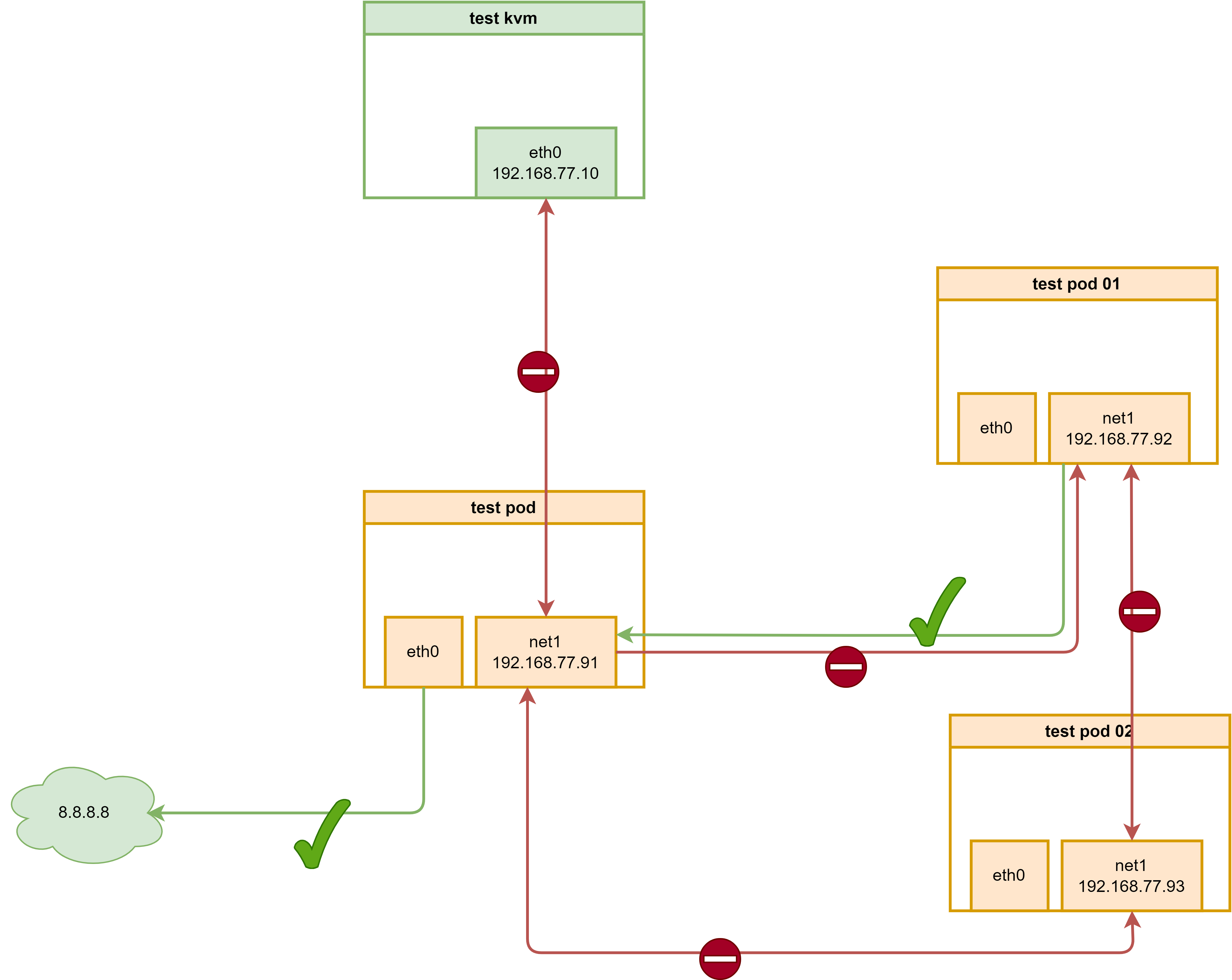

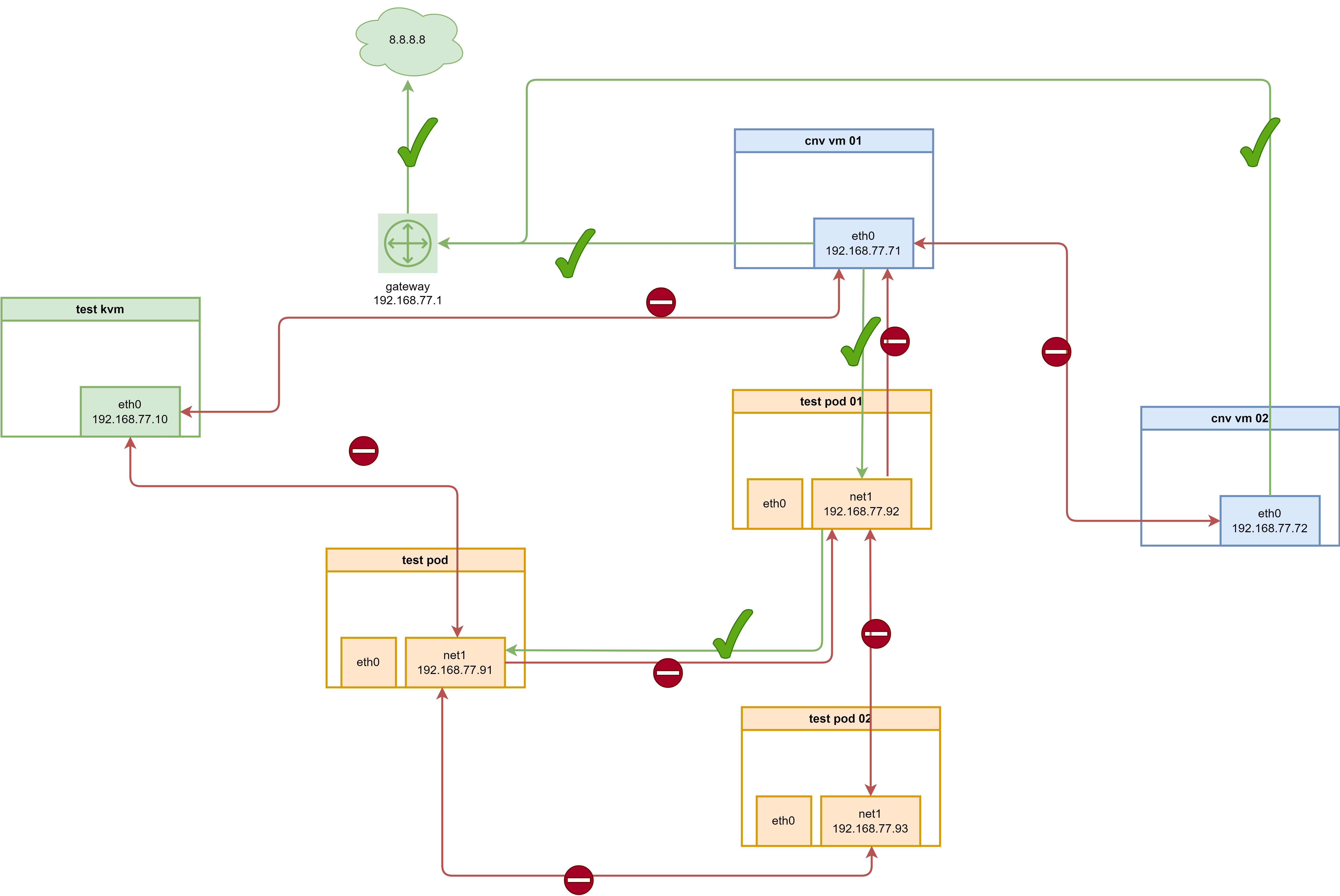

The network rules defined in this document are illustrated in the following logical diagram:

Currently, AdminNetworkPolicy does not support secondary networks, so it cannot be used in place of multi-network policy.

- https://redhat-internal.slack.com/archives/CDCP2LA9L/p1719501818805819

official doc:

- https://docs.openshift.com/container-platform/4.15/networking/multiple_networks/configuring-multi-network-policy.html#nw-multi-network-policy-enable_configuring-multi-network-policy

# enable multi-network policy at cluster level
cat << EOF > ${BASE_DIR}/data/install/multi-network-policy.yaml
apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  useMultiNetworkPolicy: true
EOF

oc patch network.operator.openshift.io cluster --type=merge --patch-file=${BASE_DIR}/data/install/multi-network-policy.yaml

# if you want to revert back
cat << EOF > ${BASE_DIR}/data/install/multi-network-policy.yaml
apiVersion: operator.openshift.io/v1
kind: Network
metadata:
  name: cluster
spec:
  useMultiNetworkPolicy: false
EOF

oc patch network.operator.openshift.io cluster --type=merge --patch-file=${BASE_DIR}/data/install/multi-network-policy.yaml

# the ConfigMap below is added by default
# cat << EOF > ${BASE_DIR}/data/install/multi-network-policy-rules.yaml
# kind: ConfigMap
# apiVersion: v1
# metadata:
#   name: multi-networkpolicy-custom-rules
#   namespace: openshift-multus
# data:
#   custom-v6-rules.txt: |
#     # accept NDP
#     -p icmpv6 --icmpv6-type neighbor-solicitation -j ACCEPT
#     -p icmpv6 --icmpv6-type neighbor-advertisement -j ACCEPT
#     # accept RA/RS
#     -p icmpv6 --icmpv6-type router-solicitation -j ACCEPT
#     -p icmpv6 --icmpv6-type router-advertisement -j ACCEPT
# EOF

# oc delete -f ${BASE_DIR}/data/install/multi-network-policy-rules.yaml

# oc apply -f ${BASE_DIR}/data/install/multi-network-policy-rules.yaml

# deny all by default
oc delete -f ${BASE_DIR}/data/install/multi-network-policy-deny-all.yaml

var_namespace='llm-demo'

cat << EOF > ${BASE_DIR}/data/install/multi-network-policy-deny-all.yaml
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: deny-by-default
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace/$var_namespace-localnet-network
spec:
  podSelector: {}
  policyTypes:
  - Ingress
  - Egress
  ingress: []
  egress: []
# does not work, as no cidr is defined
# ---
# apiVersion: k8s.cni.cncf.io/v1beta1
# kind: MultiNetworkPolicy
# metadata:
#   name: deny-by-default
#   namespace: default
#   annotations:
#     k8s.v1.cni.cncf.io/policy-for: $var_namespace/$var_namespace-localnet-network
# spec:
#   podSelector: {}
#   policyTypes:
#   - Ingress
#   - Egress
#   ingress:
#   - from:
#     - ipBlock:
#         except: '0.0.0.0/0'
#   egress:
#   - to:
#     - ipBlock:
#         except: '0.0.0.0/0'
EOF

oc apply -f ${BASE_DIR}/data/install/multi-network-policy-deny-all.yaml

# get pod ip of tinypod-01

ANOTHER_TINYPOD_IP=$(oc get pod tinypod-01 -o=jsonpath='{.status.podIP}')

echo $ANOTHER_TINYPOD_IP

# 10.132.0.40

# testing with ping to another pod using default network eth0

oc exec -it tinypod -- ping $ANOTHER_TINYPOD_IP

# PING 10.132.0.40 (10.132.0.40) 56(84) bytes of data.

# 64 bytes from 10.132.0.40: icmp_seq=1 ttl=64 time=0.806 ms

# 64 bytes from 10.132.0.40: icmp_seq=2 ttl=64 time=0.250 ms

# ......

# testing with ping to another pod using 2nd network net1

oc exec -it tinypod -- ping 192.168.77.92

# PING 192.168.77.92 (192.168.77.92) 56(84) bytes of data.

# ^C

# --- 192.168.77.92 ping statistics ---

# 30 packets transmitted, 0 received, 100% packet loss, time 29721ms

# testing with ping to another vm

# notice, here we can not ping the vm

oc exec -it tinypod -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# ^C

# --- 192.168.77.10 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3091ms

# it still can ping to outside world through default network

oc exec -it tinypod -- ping 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=114 time=1.69 ms

# 64 bytes from 8.8.8.8: icmp_seq=2 ttl=114 time=1.27 ms

# .....

# get pod ip of tinypod

ANOTHER_TINYPOD_IP=$(oc get pod tinypod -o=jsonpath='{.status.podIP}')

echo $ANOTHER_TINYPOD_IP

# 10.132.0.39

# testing with ping to another pod using default network eth0

oc exec -it tinypod-01 -- ping $ANOTHER_TINYPOD_IP

# PING 10.132.0.39 (10.132.0.39) 56(84) bytes of data.

# 64 bytes from 10.132.0.39: icmp_seq=1 ttl=64 time=0.959 ms

# 64 bytes from 10.132.0.39: icmp_seq=2 ttl=64 time=0.594 ms

# ......

# testing with ping to another pod using 2nd network net1

oc exec -it tinypod-01 -- ping 192.168.77.91

# PING 192.168.77.91 (192.168.77.91) 56(84) bytes of data.

# ^C

# --- 192.168.77.91 ping statistics ---

# 30 packets transmitted, 0 received, 100% packet loss, time 29707ms

# allow traffic only between tinypod and tinypod-01
oc delete -f ${BASE_DIR}/data/install/multi-network-policy-allow-some.yaml

var_namespace='llm-demo'

cat << EOF > ${BASE_DIR}/data/install/multi-network-policy-allow-some.yaml
# ---
# apiVersion: k8s.cni.cncf.io/v1beta1
# kind: MultiNetworkPolicy
# metadata:
#   name: allow-specific-pods
#   namespace: $var_namespace
#   annotations:
#     k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
# spec:
#   podSelector:
#     matchLabels:
#       app: tinypod
#   policyTypes:
#   - Ingress
#   ingress:
#   - from:
#     - podSelector:
#         matchLabels:
#           app: tinypod-01
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: allow-ipblock
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
spec:
  podSelector:
    matchLabels:
      app: tinypod
  policyTypes:
  - Ingress
  # - Egress
  ingress:
  - from:
    - ipBlock:
        cidr: 192.168.77.92/32
  # egress:
  # - to:
  #   - ipBlock:
  #       cidr: 192.168.77.92/32
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: allow-ipblock-01
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
spec:
  podSelector:
    matchLabels:
      app: tinypod-01
  policyTypes:
  # - Ingress
  - Egress
  # ingress:
  # - from:
  #   - ipBlock:
  #       cidr: 192.168.77.91/32
  egress:
  - to:
    - ipBlock:
        cidr: 192.168.77.91/32
EOF

oc apply -f ${BASE_DIR}/data/install/multi-network-policy-allow-some.yaml

# get pod ip of tinypod-01

ANOTHER_TINYPOD_IP=$(oc get pod tinypod-01 -o=jsonpath='{.status.podIP}')

echo $ANOTHER_TINYPOD_IP

# 10.132.0.40

# testing with ping to another pod using default network eth0

oc exec -it tinypod -- ping $ANOTHER_TINYPOD_IP

# PING 10.132.0.40 (10.132.0.40) 56(84) bytes of data.

# 64 bytes from 10.132.0.40: icmp_seq=1 ttl=64 time=0.806 ms

# 64 bytes from 10.132.0.40: icmp_seq=2 ttl=64 time=0.250 ms

# ......

# testing with ping to another pod using 2nd network net1

oc exec -it tinypod -- ping 192.168.77.92

# PING 192.168.77.92 (192.168.77.92) 56(84) bytes of data.

# ^C

# --- 192.168.77.92 ping statistics ---

# 30 packets transmitted, 0 received, 100% packet loss, time 29721ms

oc exec -it tinypod -- ping 192.168.77.93

# PING 192.168.77.93 (192.168.77.93) 56(84) bytes of data.

# ^C

# --- 192.168.77.93 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3065ms

# testing with ping to another vm

# if we do not set egress rule for default-deny-all, and allow-some, here we can ping the vm

oc exec -it tinypod -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# 64 bytes from 192.168.77.10: icmp_seq=1 ttl=64 time=0.672 ms

# 64 bytes from 192.168.77.10: icmp_seq=2 ttl=64 time=0.674 ms

# ......

# but we set the egress rule, so we can not ping vm now

oc exec -it tinypod -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# ^C

# --- 192.168.77.10 ping statistics ---

# 3 packets transmitted, 0 received, 100% packet loss, time 2085ms

# it still can ping to outside world through default network

oc exec -it tinypod -- ping 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=114 time=1.69 ms

# 64 bytes from 8.8.8.8: icmp_seq=2 ttl=114 time=1.27 ms

# .....

# get pod ip of tinypod

ANOTHER_TINYPOD_IP=$(oc get pod tinypod -o=jsonpath='{.status.podIP}')

echo $ANOTHER_TINYPOD_IP

# 10.132.0.39

# testing with ping to another pod using default network eth0

oc exec -it tinypod-01 -- ping $ANOTHER_TINYPOD_IP

# PING 10.132.0.39 (10.132.0.39) 56(84) bytes of data.

# 64 bytes from 10.132.0.39: icmp_seq=1 ttl=64 time=0.959 ms

# 64 bytes from 10.132.0.39: icmp_seq=2 ttl=64 time=0.594 ms

# ......

# testing with ping to another pod using 2nd network net1

# you can see, we can ping to tinypod, which is allowed by multi-network policy

oc exec -it tinypod-01 -- ping 192.168.77.91

# PING 192.168.77.91 (192.168.77.91) 56(84) bytes of data.

# 64 bytes from 192.168.77.91: icmp_seq=1 ttl=64 time=0.278 ms

# 64 bytes from 192.168.77.91: icmp_seq=2 ttl=64 time=0.032 ms

# ....

oc exec -it tinypod-01 -- ping 192.168.77.93

# PING 192.168.77.93 (192.168.77.93) 56(84) bytes of data.

# ^C

# --- 192.168.77.93 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3085ms

# testing with ping to vm

oc exec -it tinypod-01 -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# ^C

# --- 192.168.77.10 ping statistics ---

# 3 packets transmitted, 0 received, 100% packet loss, time 2085ms

# it still can ping to outside world through default network

oc exec -it tinypod-01 -- ping 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=114 time=1.15 ms

# 64 bytes from 8.8.8.8: icmp_seq=2 ttl=114 time=0.824 ms

# ......

oc exec -it tinypod-02 -- ping 192.168.77.91

# PING 192.168.77.91 (192.168.77.91) 56(84) bytes of data.

# ^C

# --- 192.168.77.91 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3089ms

oc exec -it tinypod-02 -- ping 192.168.77.92

# PING 192.168.77.92 (192.168.77.92) 56(84) bytes of data.

# ^C

# --- 192.168.77.92 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3069ms

oc exec -it tinypod-02 -- ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# ^C

# --- 192.168.77.10 ping statistics ---

# 3 packets transmitted, 0 received, 100% packet loss, time 2067ms

oc get multi-networkpolicies -A

# NAMESPACE NAME AGE

# llm-demo allow-ipblock 74m

# llm-demo allow-ipblock-01 74m

# llm-demo deny-by-default 82m

Our OVN secondary network has no IPAM, so ingress rules with a pod selector do not work; see the log from project openshift-ovn-kubernetes -> pod ovnkube-node -> container ovnkube-controller. This is why we use ipBlock to allow traffic between pods.

I0718 13:03:32.619246 7659 obj_retry.go:346] Retry delete failed for *v1beta1.MultiNetworkPolicy llm-demo/allow-specific-pods, will try again later: invalid ingress peer {&LabelSelector{MatchLabels:map[string]string{app: tinypod-01,},MatchExpressions:[]LabelSelectorRequirement{},} nil } in multi-network policy allow-specific-pods; IPAM-less networks can only have ipBlock peers

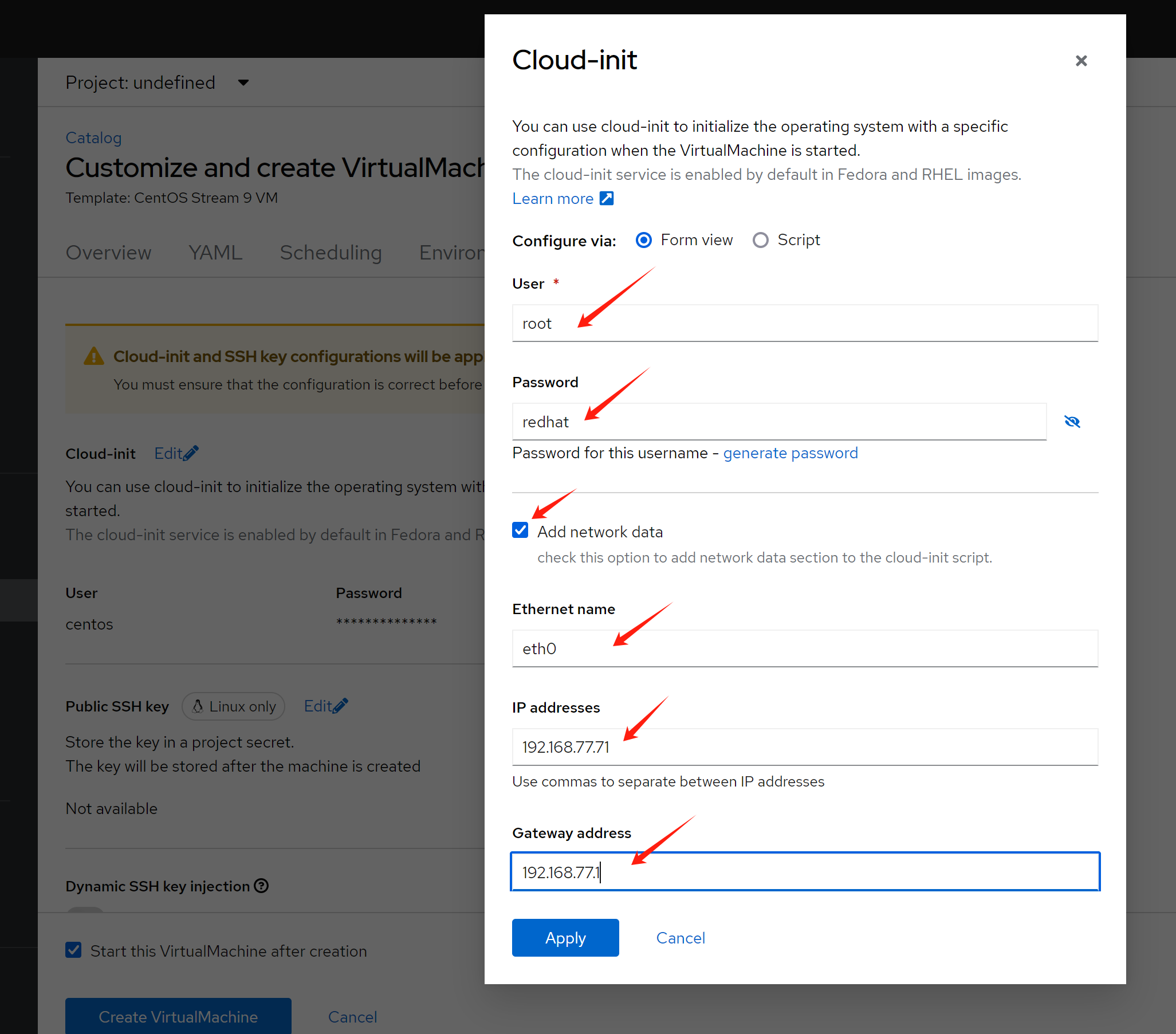
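Since a selector-based peer is only rejected later, in the ovnkube-controller log, a quick pre-check of the manifest can save a round trip. The sketch below is a text heuristic, not a YAML parser: it relies on the fact that a peer selector appears as a list item (`- podSelector:`), while the top-level `spec.podSelector` is a plain key. The function name `ipamless_policy_ok` is hypothetical.

```bash
# Succeeds when the manifest contains no selector-based peers.
# Commented-out lines are ignored because they do not start with "-".
ipamless_policy_ok() {
  local file=$1
  if grep -nE '^[[:space:]]*-[[:space:]]*(podSelector|namespaceSelector):' "$file"; then
    echo "selector-based peer found; IPAM-less networks need ipBlock peers" >&2
    return 1
  fi
  return 0
}

# Usage (not executed here):
#   ipamless_policy_ok ${BASE_DIR}/data/install/multi-network-policy-allow-some.yaml
```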

try with cnv

[!NOTE] The CNV use case will not work if the underlying network does not allow multiple MAC addresses on a single port.

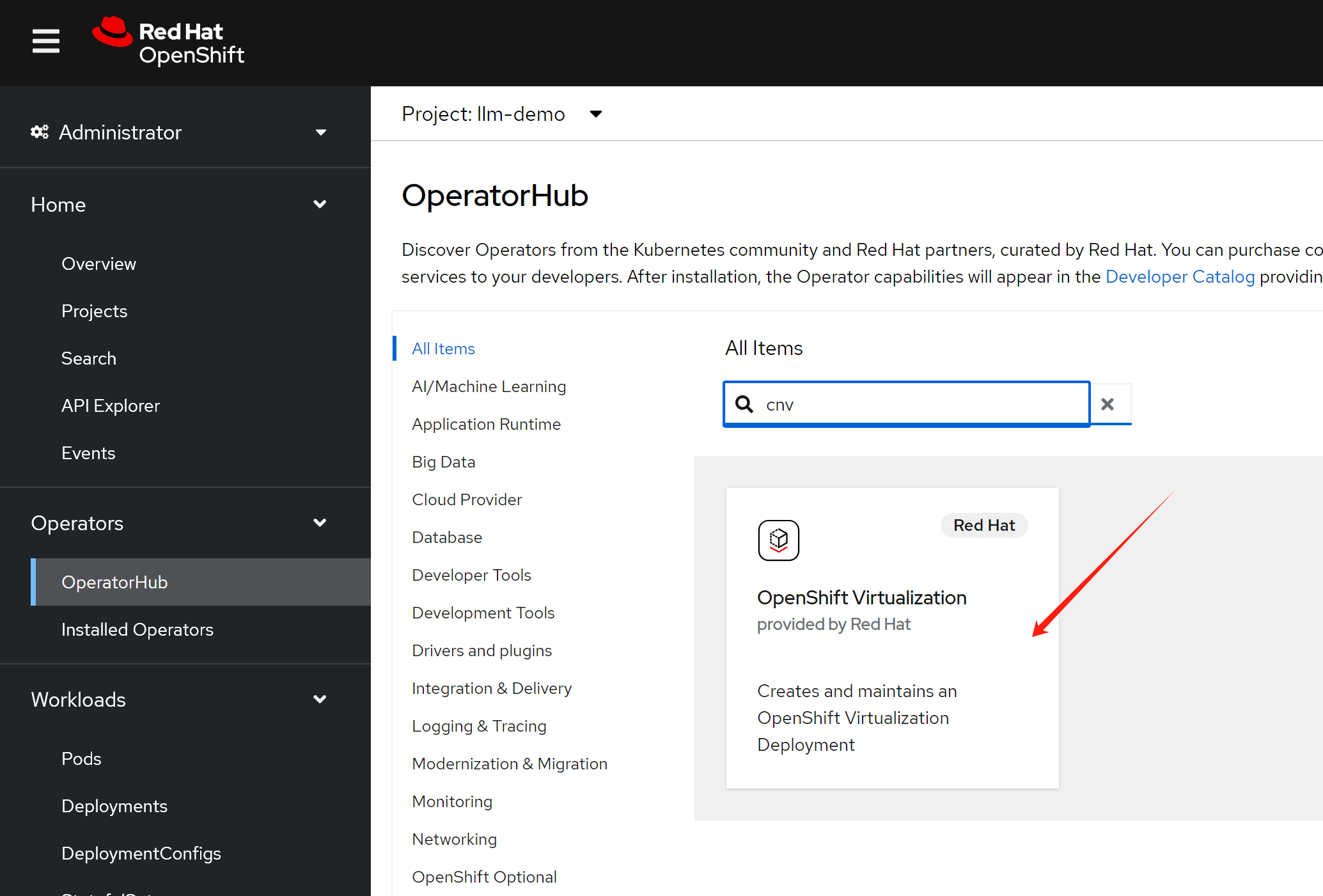

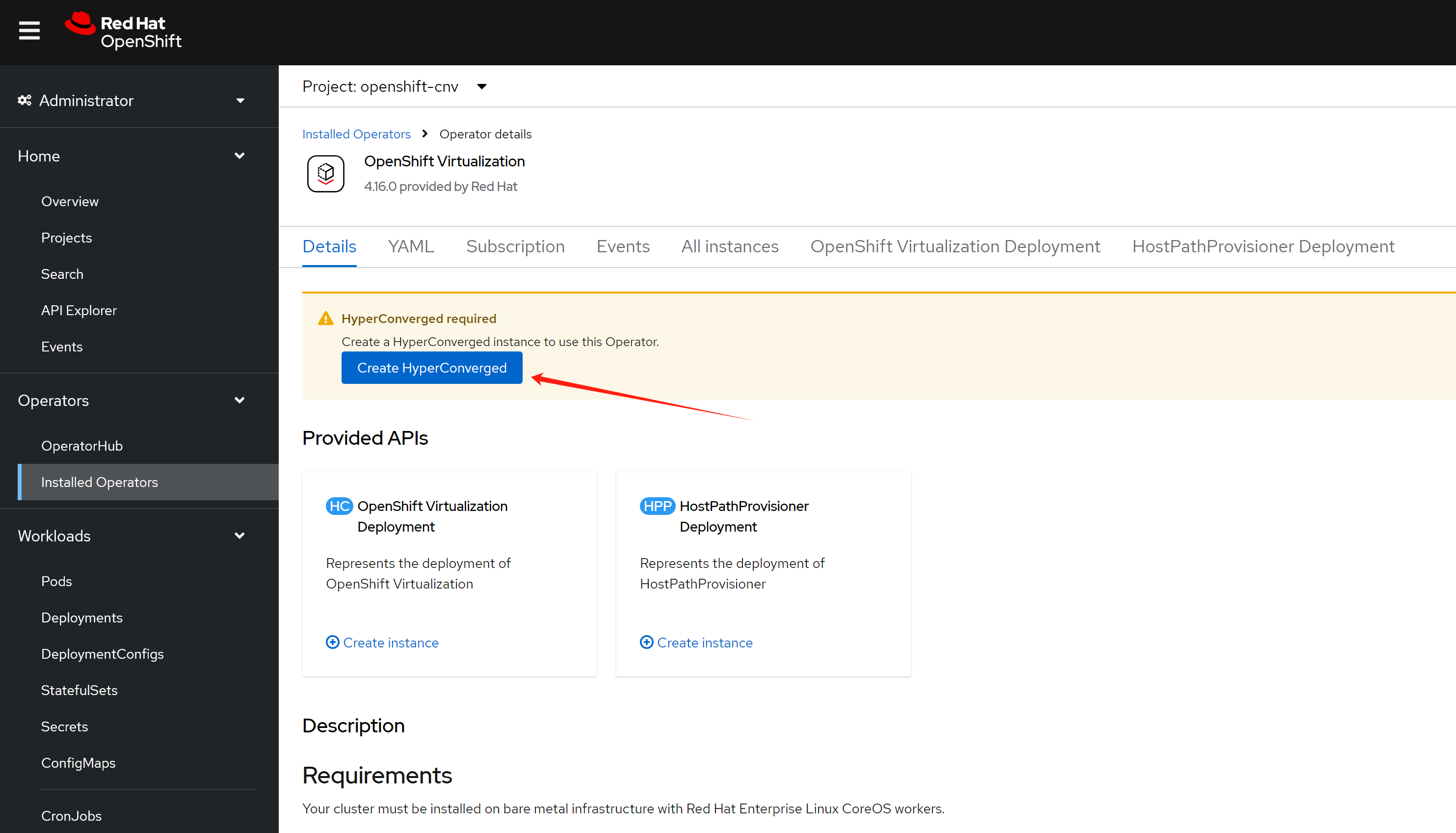

First, we need to install the CNV operator.

Create a default instance with the default settings.

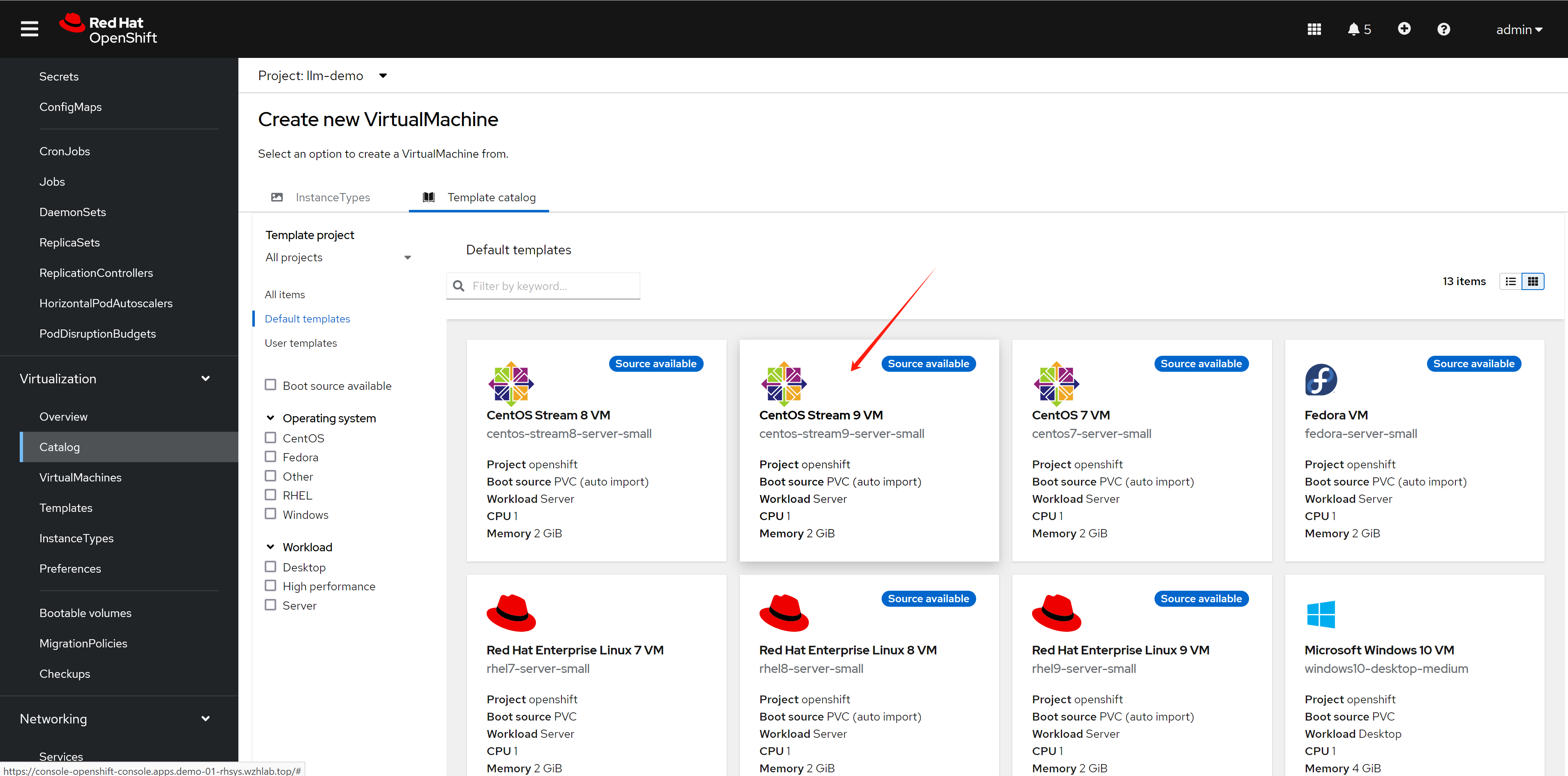

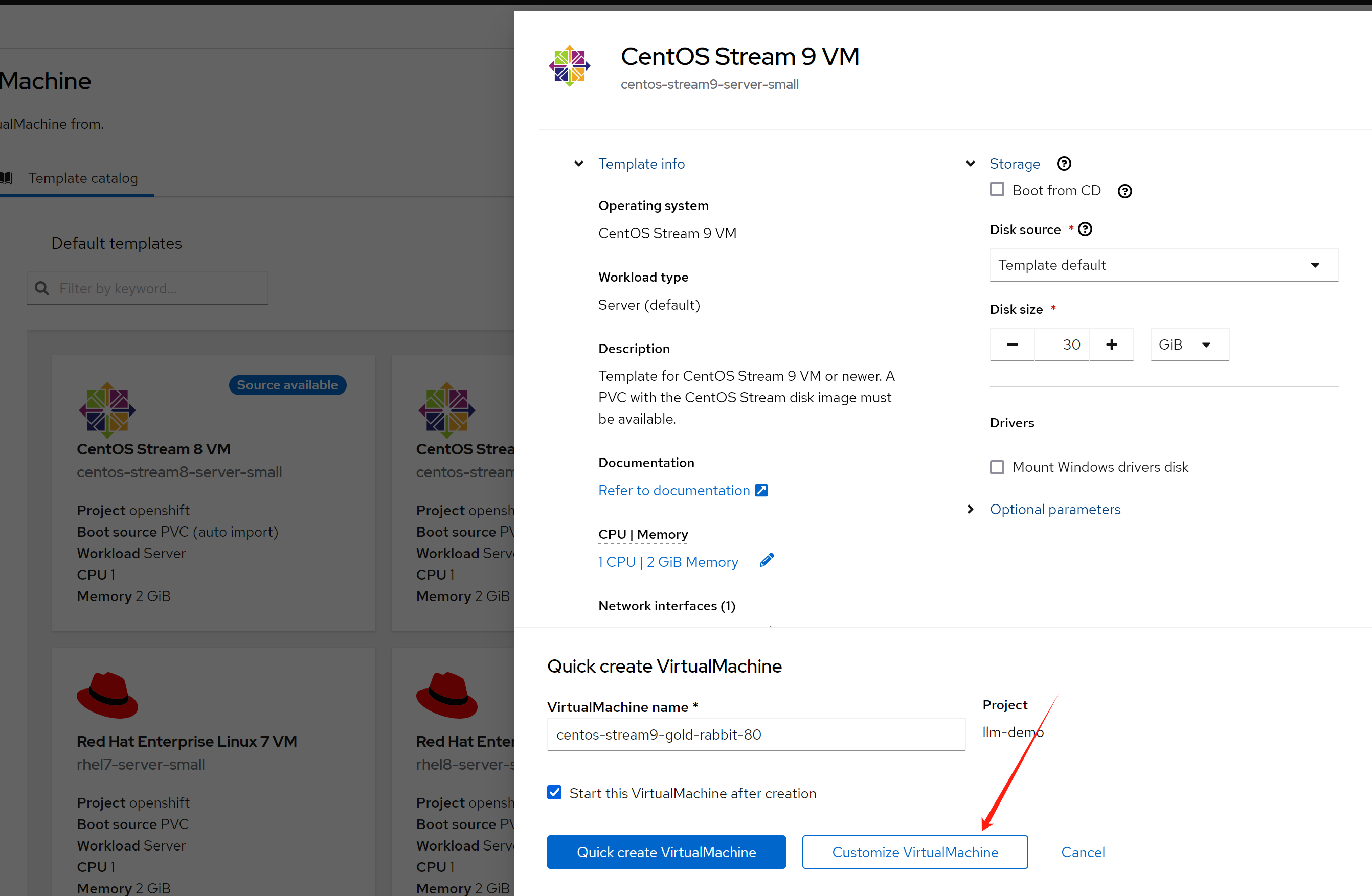

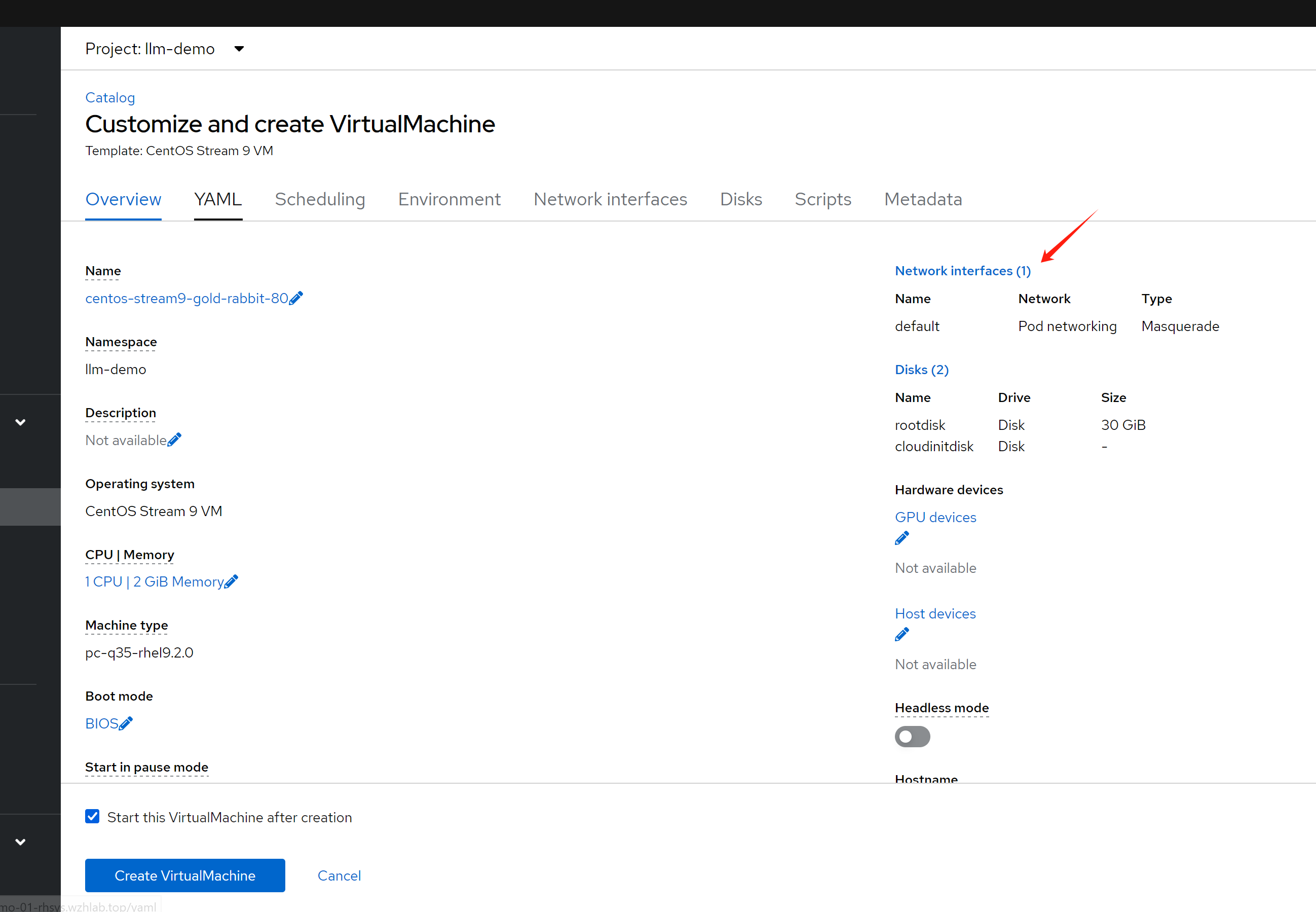

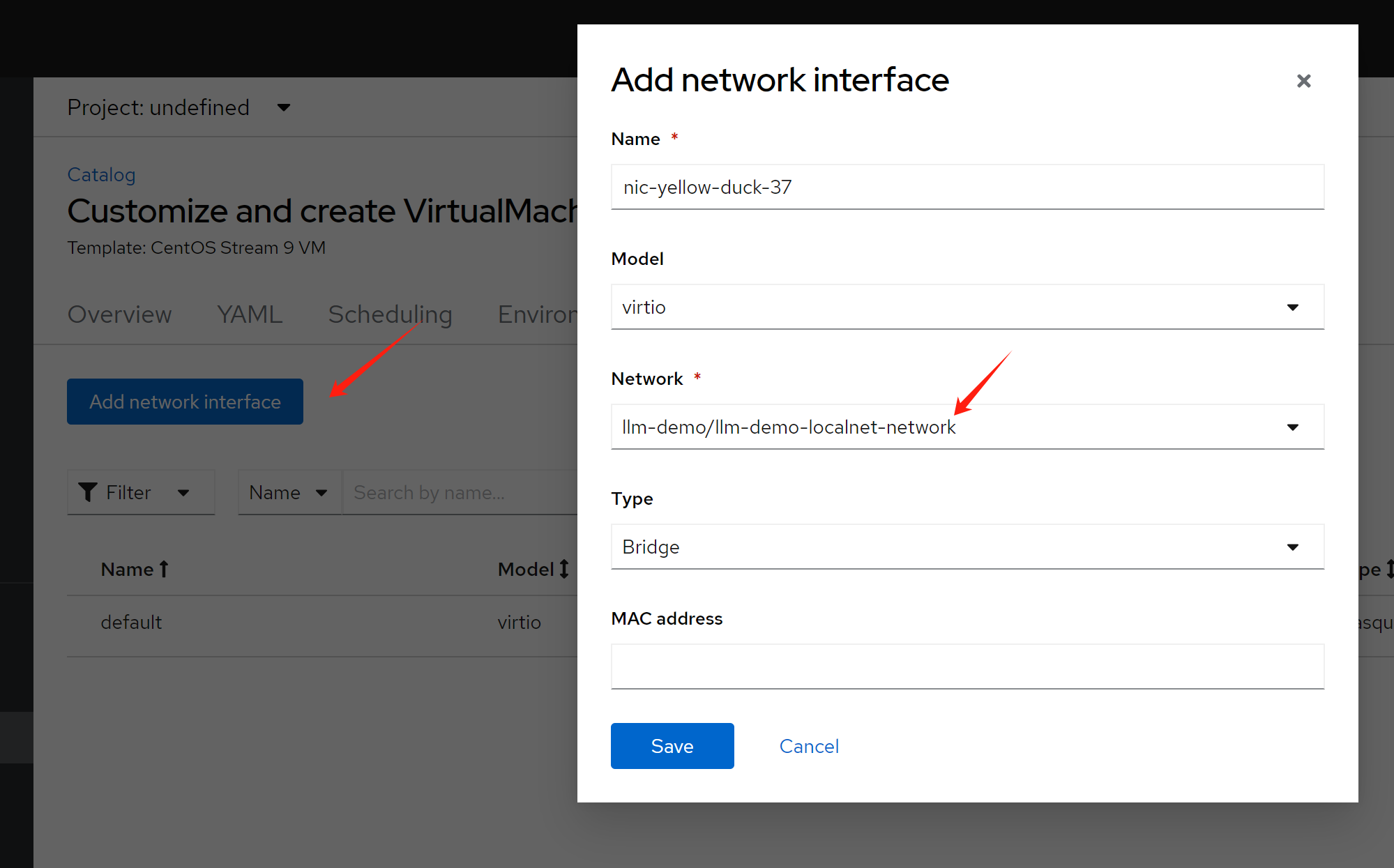

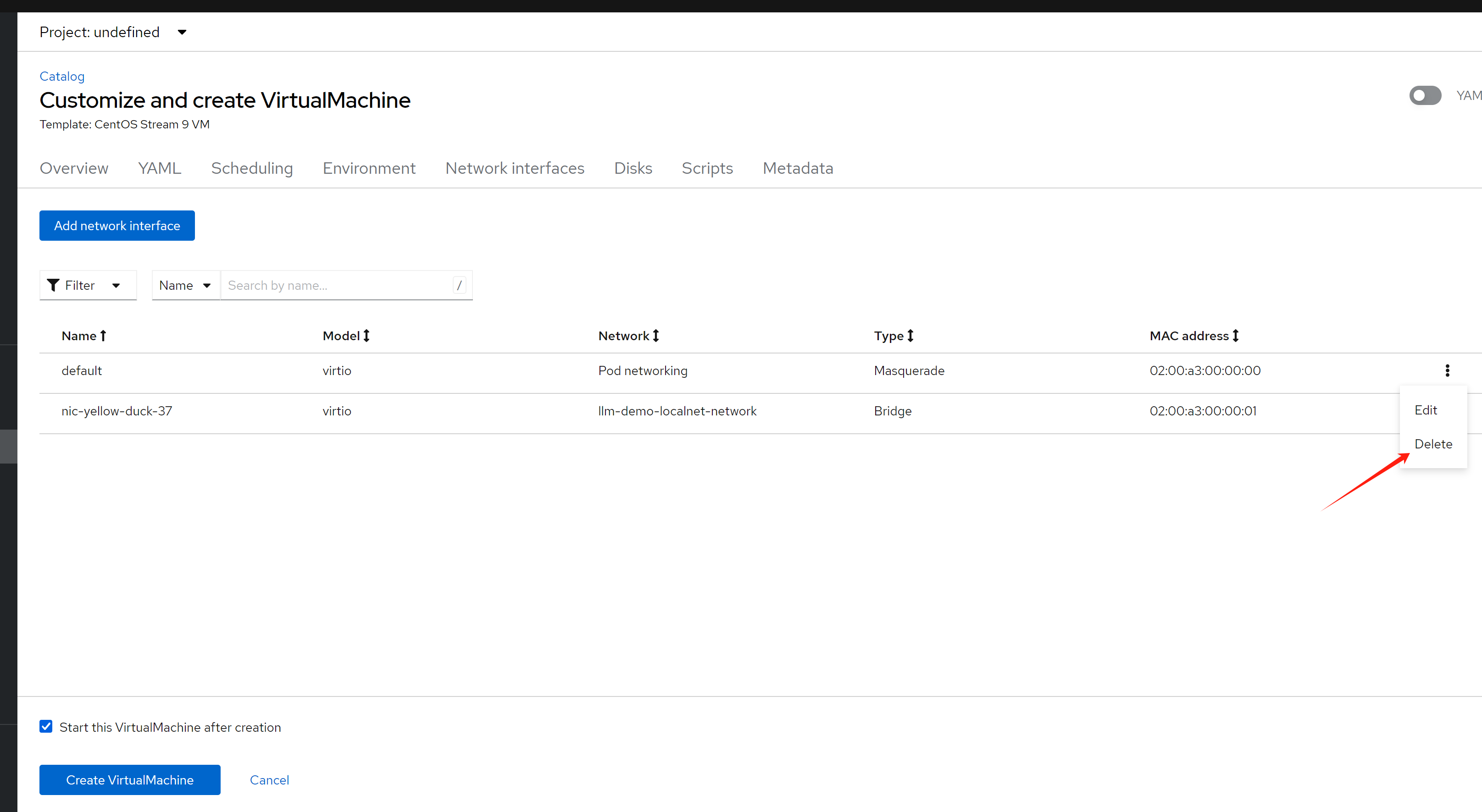

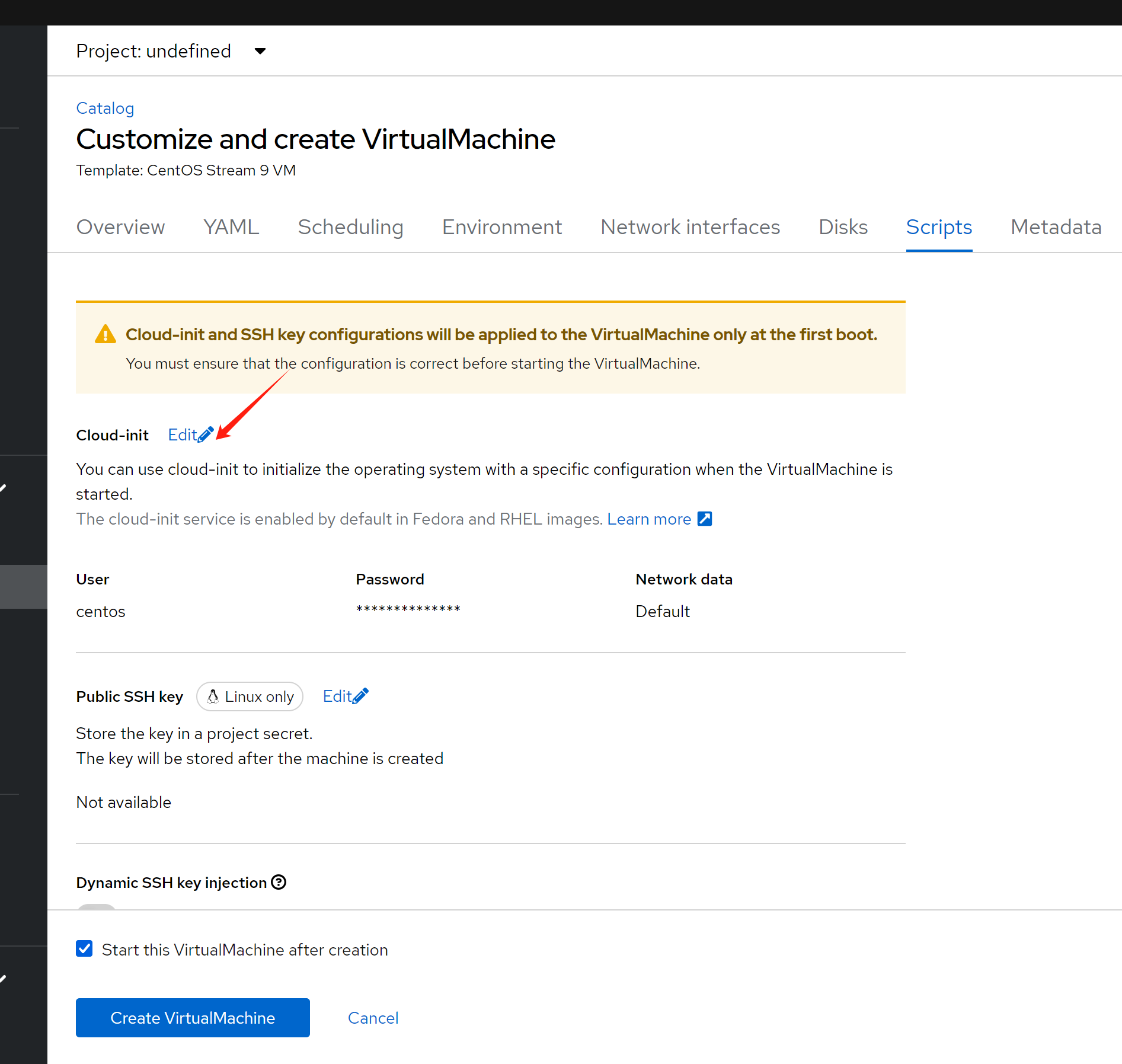

Wait a while for CNV to download the OS base images. After that, we create the VM.

Create the VM with CentOS Stream 9 from the template catalog.

At the beginning, the VM cannot ping any IP and cannot be pinged from any IP. After applying the additional network policies, the VM can ping the gateway, the test pod, and the outside world.

The overall network policy is like below:

# get vm

oc get vm

# NAME AGE STATUS READY

# centos-stream9-gold-rabbit-80 2d17h Running True

# centos-stream9-green-ferret-41 54s Running True

# allow selected traffic for the two cnv vms and for tinypod-01
oc delete -f ${BASE_DIR}/data/install/multi-network-policy-allow-some-cnv.yaml

var_namespace='llm-demo'
var_vm='centos-stream9-gold-rabbit-80'
var_vm_01='centos-stream9-green-ferret-41'

cat << EOF > ${BASE_DIR}/data/install/multi-network-policy-allow-some-cnv.yaml
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: allow-ipblock-cnv-01
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
spec:
  podSelector:
    matchLabels:
      vm.kubevirt.io/name: $var_vm
  policyTypes:
  - Ingress
  - Egress
  ingress:
  - from:
    # from gateway
    - ipBlock:
        cidr: 192.168.77.1/32
    # from test pod
    - ipBlock:
        cidr: 192.168.77.92/32
  egress:
  - to:
    # can go anywhere on the internet, except the ips in the same network
    - ipBlock:
        cidr: 0.0.0.0/0
        except:
        - 192.168.77.0/24
    # to gateway
    - ipBlock:
        cidr: 192.168.77.1/32
    # to test pod
    - ipBlock:
        cidr: 192.168.77.92/32
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: allow-ipblock-cnv-02
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
spec:
  podSelector:
    matchLabels:
      vm.kubevirt.io/name: $var_vm_01
  policyTypes:
  - Ingress
  - Egress
  ingress:
  - from:
    # from gateway
    - ipBlock:
        cidr: 192.168.77.1/32
  egress:
  - to:
    # to gateway
    - ipBlock:
        cidr: 192.168.77.1/32
---
apiVersion: k8s.cni.cncf.io/v1beta1
kind: MultiNetworkPolicy
metadata:
  name: allow-ipblock-cnv-03
  namespace: $var_namespace
  annotations:
    k8s.v1.cni.cncf.io/policy-for: $var_namespace-localnet-network
spec:
  podSelector:
    matchLabels:
      app: tinypod-01
  policyTypes:
  - Ingress
  ingress:
  - from:
    # from test vm
    - ipBlock:
        cidr: 192.168.77.71/32
EOF

oc apply -f ${BASE_DIR}/data/install/multi-network-policy-allow-some-cnv.yaml

test

We ran some tests against the rules now in place to check whether they behave as intended.
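To reason about what to expect before pinging, the egress side of the first VM's policy (`allow-ipblock-cnv-01`) can be modelled in a few lines. This is only a sketch of the matching logic, with hypothetical function names; it ignores the ingress policy at the destination, which can still drop traffic that egress allows.

```bash
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo $(( (10#${o[0]} << 24) | (10#${o[1]} << 16) | (10#${o[2]} << 8) | 10#${o[3]} ))
}

# Succeeds when IP ($1) falls inside CIDR ($2).
ip_in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  local mask=$(( bits == 0 ? 0 : (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ( $(ip_to_int "$ip") & mask ) == ( $(ip_to_int "$net") & mask ) ))
}

# Egress peers of allow-ipblock-cnv-01; peers are OR-ed together.
vm_egress_allowed() {
  local dst=$1
  # 0.0.0.0/0 except 192.168.77.0/24
  if ip_in_cidr "$dst" 0.0.0.0/0 && ! ip_in_cidr "$dst" 192.168.77.0/24; then
    return 0
  fi
  ip_in_cidr "$dst" 192.168.77.1/32 && return 0    # gateway
  ip_in_cidr "$dst" 192.168.77.92/32 && return 0   # test pod
  return 1
}

for dst in 8.8.8.8 192.168.77.1 192.168.77.92 192.168.77.10 192.168.77.93; do
  if vm_egress_allowed "$dst"; then echo "$dst allow"; else echo "$dst deny"; fi
done
```

Under this model the gateway, the test pod and 8.8.8.8 are allowed, while 192.168.77.10 and 192.168.77.93 are denied, which is consistent with the ping results recorded in this section.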

# on the cnv vm(192.168.77.71), can not ping the outside test vm

ping 192.169.77.10

# PING 192.169.77.10 (192.169.77.10) 56(84) bytes of data.

# ^C

# --- 192.169.77.10 ping statistics ---

# 3 packets transmitted, 0 received, 100% packet loss, time 2053ms

# on the outside test vm(192.168.77.10), can not ping the cnv vm

ping 192.168.77.71

# PING 192.168.77.71 (192.168.77.71) 56(84) bytes of data.

# ^C

# --- 192.168.77.71 ping statistics ---

# 52 packets transmitted, 0 received, 100% packet loss, time 52260ms

# on the cnv vm(192.168.77.71), can ping the gateway, and the test pod, and outside

ping 192.168.77.1

# PING 192.168.77.1 (192.168.77.1) 56(84) bytes of data.

# 64 bytes from 192.168.77.1: icmp_seq=1 ttl=64 time=1.22 ms

# 64 bytes from 192.168.77.1: icmp_seq=2 ttl=64 time=0.812 ms

# ....

ping 192.168.77.92

# PING 192.168.77.92 (192.168.77.92) 56(84) bytes of data.

# 64 bytes from 192.168.77.92: icmp_seq=1 ttl=64 time=1.32 ms

# 64 bytes from 192.168.77.92: icmp_seq=2 ttl=64 time=0.821 ms

# ....

ping 8.8.8.8

# PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.

# 64 bytes from 8.8.8.8: icmp_seq=1 ttl=116 time=1.39 ms

# 64 bytes from 8.8.8.8: icmp_seq=2 ttl=116 time=1.11 ms

# ....

# on the cnv vm(192.168.77.71), can not ping the test vm(192.168.77.10), and another test pod

ping 192.168.77.10

# PING 192.168.77.10 (192.168.77.10) 56(84) bytes of data.

# ^C

# --- 192.168.77.10 ping statistics ---

# 3 packets transmitted, 0 received, 100% packet loss, time 2078ms

ping 192.168.77.93

# PING 192.168.77.93 (192.168.77.93) 56(84) bytes of data.

# ^C

# --- 192.168.77.93 ping statistics ---

# 4 packets transmitted, 0 received, 100% packet loss, time 3087ms

# on the test pod(192.168.77.92), can not ping the cnv vm(192.168.77.71)

oc exec -it tinypod-01 -- ping 192.168.77.71

# PING 192.168.77.71 (192.168.77.71) 56(84) bytes of data.

# ^C

# --- 192.168.77.71 ping statistics ---

# 2 packets transmitted, 0 received, 100% packet loss, time 1003ms

# on another cnv vm(192.168.77.72), can ping to gateway(192.168.77.1), but can not ping to cnv test vm(192.168.77.71)

ping 192.168.77.1

# PING 192.168.77.1 (192.168.77.1) 56(84) bytes of data.

# 64 bytes from 192.168.77.1: icmp_seq=1 ttl=64 time=0.756 ms

# 64 bytes from 192.168.77.1: icmp_seq=2 ttl=64 time=0.434 ms

ping 192.168.77.71

# PING 192.168.77.71 (192.168.77.71) 56(84) bytes of data.

# ^C

# --- 192.168.77.71 ping statistics ---

# 5 packets transmitted, 0 received, 100% packet loss, time 4109ms

network observ

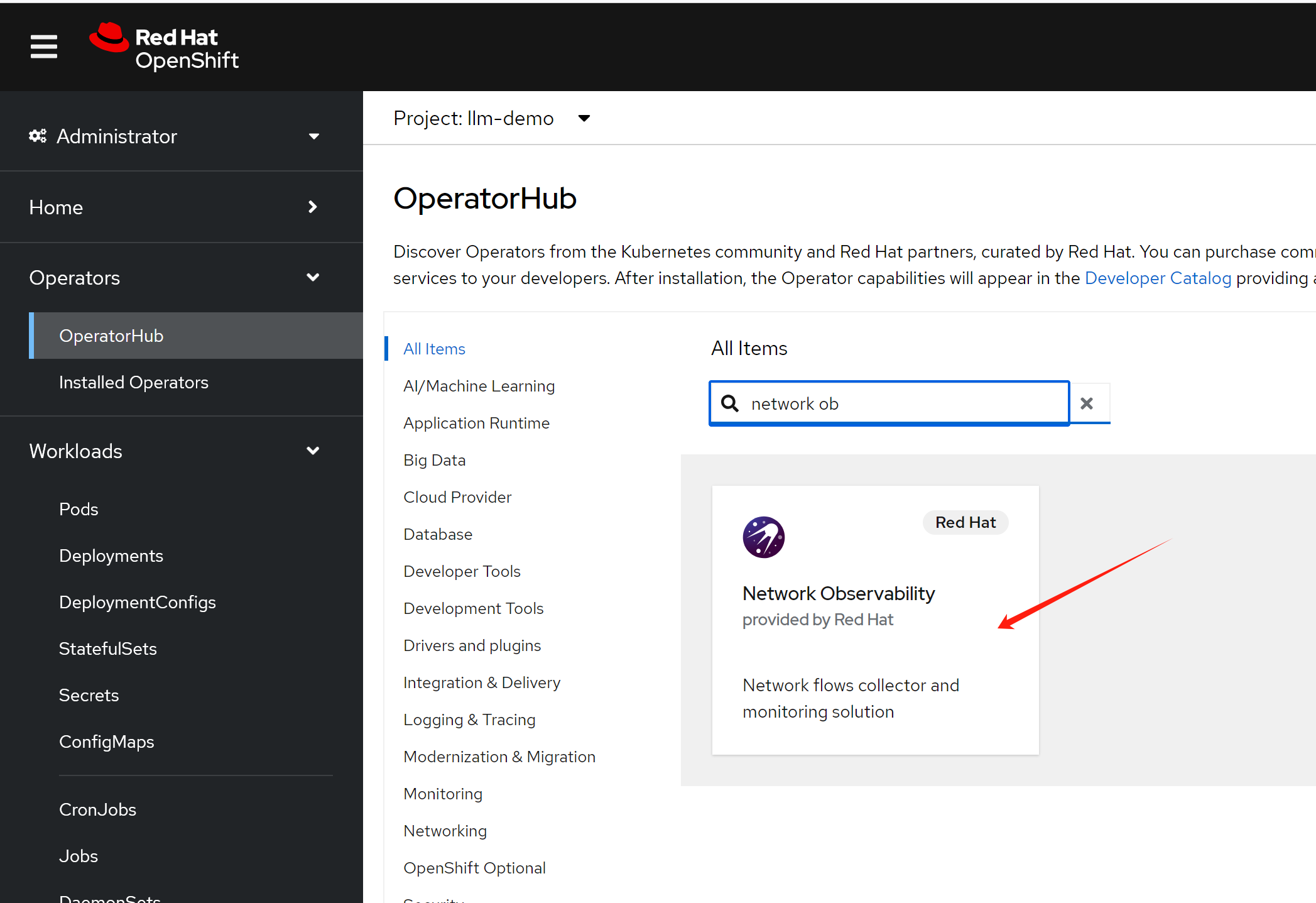

Reportedly, starting with v1.6.1, network observability supports the 2nd ovn network.

tech in the background

Our tests show that ovn on the second network meets customer requirements. However, we are not satisfied with just the surface configuration. We want to understand the underlying principles, especially how the various components on the network level connect and communicate with each other.

for pod

Let’s first examine how various network components connect in a pod environment.
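One detail that makes the output in this section easier to read: where OVN-Kubernetes knows a pod's IPv4 address, it derives the interface MAC from it by prefixing `0a:58` to the four address bytes in hex. The sketch below reproduces that mapping; the function name is hypothetical. VM ports are different, since their MACs come from the VM definition and the port lists no IP.

```bash
# Derive the OVN-Kubernetes style MAC (0a:58 + IPv4 bytes in hex).
ovn_mac_for_ipv4() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  printf '0a:58:%02x:%02x:%02x:%02x\n' "${o[0]}" "${o[1]}" "${o[2]}" "${o[3]}"
}

ovn_mac_for_ipv4 192.168.77.91
ovn_mac_for_ipv4 10.132.0.53
```

The two results match the `net1` and `eth0` MAC addresses of tinypod shown in the `ip a` output of this section, which is handy when grepping `ovn-nbctl show` for a pod's port.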

# let's see the interface, mac, and ip address in pod

oc exec -it tinypod -n llm-demo -- ip a

# 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

# link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

# inet 127.0.0.1/8 scope host lo

# valid_lft forever preferred_lft forever

# inet6 ::1/128 scope host

# valid_lft forever preferred_lft forever

# 2: eth0@if52: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default

# link/ether 0a:58:0a:84:00:35 brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet 10.132.0.53/23 brd 10.132.1.255 scope global eth0

# valid_lft forever preferred_lft forever

# inet6 fe80::858:aff:fe84:35/64 scope link

# valid_lft forever preferred_lft forever

# 3: net1@if56: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default

# link/ether 0a:58:c0:a8:4d:5b brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet 192.168.77.91/24 brd 192.168.77.255 scope global net1

# valid_lft forever preferred_lft forever

# inet6 fe80::858:c0ff:fea8:4d5b/64 scope link

# valid_lft forever preferred_lft forever

# on master-01 node

# let's check the nic interface information

ip a show dev if56

# 56: a51a8137f92b2_3@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master ovs-system state UP group default

# link/ether 7e:03:ea:89:f1:48 brd ff:ff:ff:ff:ff:ff link-netns 23fb7f53-6063-4954-9aa7-07c271699e72

# inet6 fe80::7c03:eaff:fe89:f148/64 scope link

# valid_lft forever preferred_lft forever

ip a show dev ovs-system

# 4: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

# link/ether 16:a7:be:91:ae:72 brd ff:ff:ff:ff:ff:ff

# there is no firewall rules on ocp node

nft list ruleset | grep 192.168.77

# nothing

############################################

# show something in the ovn internally

# get the ovn pod, so we can exec into it

VAR_POD=`oc get pod -n openshift-ovn-kubernetes -o wide | grep master-01-demo | grep ovnkube-node | awk '{print $1}'`

# get ovn information about pod default network

# we can see it is a port on switch

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl show | grep -i 0a:58:0a:84:00:35 -A 10 -B 10

# switch e22c4467-5d22-4fa2-83d7-d00d19c9684d (master-01-demo)
#     port openshift-nmstate_nmstate-webhook-58fc66d999-h7jrb
#         addresses: ["0a:58:0a:84:00:45 10.132.0.69"]
#     port openshift-cnv_virt-handler-dtv6k
#         addresses: ["0a:58:0a:84:00:e2 10.132.0.226"]
# ......
#     port llm-demo_tinypod
#         addresses: ["0a:58:0a:84:00:35 10.132.0.53"]
# ......

# get ovn information about the pod's 2nd ovn network
# we can see it is a port on another switch
oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl show | grep -i 0a:58:c0:a8:4d:5b -A 10 -B 10

# ....
# switch 5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)
#     port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-green-ferret-41-ksmzt
#         addresses: ["02:00:a3:00:00:02"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-gold-rabbit-80-vjfdm
#         addresses: ["02:00:a3:00:00:01"]
#     port localnet.cnv_ovn_localnet_port
#         type: localnet
#         addresses: ["unknown"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod
#         addresses: ["0a:58:c0:a8:4d:5b 192.168.77.91"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-01
#         addresses: ["0a:58:c0:a8:4d:5c 192.168.77.92"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-02
#         addresses: ["0a:58:c0:a8:4d:5d 192.168.77.93"]
# ....

# get the overall switch and router topologies

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl show

# switch 7f294c4e-fce4-4c16-b830-17d7ca545078 (ext_master-01-demo)
#     port br-ex_master-01-demo
#         type: localnet
#         addresses: ["unknown"]
#     port etor-GR_master-01-demo
#         type: router
#         addresses: ["00:50:56:8e:b8:11"]
#         router-port: rtoe-GR_master-01-demo
# switch 9b84fe16-da8c-4abb-b071-6c46c5191a68 (join)
#     port jtor-GR_master-01-demo
#         type: router
#         router-port: rtoj-GR_master-01-demo
#     port jtor-ovn_cluster_router
#         type: router
#         router-port: rtoj-ovn_cluster_router
# switch 5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)
#     port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-green-ferret-41-ksmzt
#         addresses: ["02:00:a3:00:00:02"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-gold-rabbit-80-vjfdm
#         addresses: ["02:00:a3:00:00:01"]
#     port localnet.cnv_ovn_localnet_port
#         type: localnet
#         addresses: ["unknown"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod
#         addresses: ["0a:58:c0:a8:4d:5b 192.168.77.91"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-01
#         addresses: ["0a:58:c0:a8:4d:5c 192.168.77.92"]
#     port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-02
#         addresses: ["0a:58:c0:a8:4d:5d 192.168.77.93"]
# switch e22c4467-5d22-4fa2-83d7-d00d19c9684d (master-01-demo)
#     port openshift-nmstate_nmstate-webhook-58fc66d999-h7jrb
#         addresses: ["0a:58:0a:84:00:45 10.132.0.69"]
#     port openshift-cnv_virt-handler-dtv6k
#         addresses: ["0a:58:0a:84:00:e2 10.132.0.226"]
# ......

# get the ovs config, and we can see the localnet mappings in the external-ids

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovs-vsctl list Open_vSwitch

# _uuid : 7b956824-5c57-4065-8a84-8718dfaf04b5

# bridges : [9f680a4d-1085-4421-95fe-f5933c4b39d4, a6a8ff10-58dc-4202-afed-4ee527cbabfd]

# cur_cfg : 2775

# datapath_types : [netdev, system]

# datapaths : {system=f78f4023-298f-44c4-ac91-a5c5eb3d1b31}

# db_version : "8.3.1"

# dpdk_initialized : false

# dpdk_version : "DPDK 22.11.4"

# external_ids : {hostname=master-01-demo, ovn-bridge-mappings="localnet-cnv:br-ex,physnet:br-ex", ovn-enable-lflow-cache="true", ovn-encap-ip="192.168.99.23", ovn-encap-type=geneve, ovn-is-interconn="true", ovn-memlimit-lflow-cache-kb="1048576", ovn-monitor-all="true", ovn-ofctrl-wait-before-clear="0", ovn-openflow-probe-interval="180", ovn-remote="unix:/var/run/ovn/ovnsb_db.sock", ovn-remote-probe-interval="180000", rundir="/var/run/openvswitch", system-id="17b1f051-7ec5-468a-8e1a-ffb8fa9e85bc"}

# iface_types : [bareudp, erspan, geneve, gre, gtpu, internal, ip6erspan, ip6gre, lisp, patch, stt, system, tap, vxlan]

# manager_options : []

# next_cfg : 2775

# other_config : {bundle-idle-timeout="180", ovn-chassis-idx-17b1f051-7ec5-468a-8e1a-ffb8fa9e85bc="", vlan-limit="0"}

# ovs_version : "3.1.5"

# ssl : []

# statistics : {}

# system_type : rhcos

# system_version : "4.15"

# query only the ovn-bridge-mappings key from the external-ids

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovs-vsctl get Open_vSwitch . external-ids:ovn-bridge-mappings

# "localnet-cnv:br-ex,physnet:br-ex"
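
The value is a comma-separated list of `network:bridge` pairs. As a minimal sketch, the hypothetical helper `parse_bridge_mappings` below splits it into one pair per line, which makes it easy to see that both `localnet-cnv` and `physnet` are mapped to `br-ex` in this lab.

```shell
# Sketch: split an ovn-bridge-mappings value into "network -> bridge" lines.
# parse_bridge_mappings is a hypothetical helper name.
parse_bridge_mappings() {
  local raw pair
  # Drop the surrounding double quotes that ovs-vsctl prints.
  raw=$(printf '%s' "$1" | tr -d '"')
  local IFS=','
  for pair in $raw; do
    # Left of the first colon is the network name, right of it the bridge.
    printf '%s -> %s\n' "${pair%%:*}" "${pair#*:}"
  done
}

parse_bridge_mappings '"localnet-cnv:br-ex,physnet:br-ex"'
# prints:
# localnet-cnv -> br-ex
# physnet -> br-ex
```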

# in the ovn topology, the port localnet.cnv_ovn_localnet_port has type localnet

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl show | grep -i localnet -A 10 -B 10

# switch 7f294c4e-fce4-4c16-b830-17d7ca545078 (ext_master-01-demo)

# port br-ex_master-01-demo

# type: localnet

# addresses: ["unknown"]

# port etor-GR_master-01-demo

# type: router

# addresses: ["00:50:56:8e:b8:11"]

# router-port: rtoe-GR_master-01-demo

# ......

# switch 5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)

# port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-green-ferret-41-ksmzt

# addresses: ["02:00:a3:00:00:02"]

# port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-gold-rabbit-80-vjfdm

# addresses: ["02:00:a3:00:00:01"]

# port localnet.cnv_ovn_localnet_port

# type: localnet

# addresses: ["unknown"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod

# addresses: ["0a:58:c0:a8:4d:5b 192.168.77.91"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-01

# addresses: ["0a:58:c0:a8:4d:5c 192.168.77.92"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-02

# addresses: ["0a:58:c0:a8:4d:5d 192.168.77.93"]

# in the ovs config, the localnet port is implemented as a pair of patch interfaces between br-ex and br-int

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovs-vsctl show | grep localnet -A 10 -B 240

# 7b956824-5c57-4065-8a84-8718dfaf04b5

# Bridge br-ex

# Port bond0

# Interface bond0

# type: system

# Port patch-localnet.cnv_ovn_localnet_port-to-br-int

# Interface patch-localnet.cnv_ovn_localnet_port-to-br-int

# type: patch

# options: {peer=patch-br-int-to-localnet.cnv_ovn_localnet_port}

# Port br-ex

# Interface br-ex

# type: internal

# Port patch-br-ex_master-01-demo-to-br-int

# Interface patch-br-ex_master-01-demo-to-br-int

# type: patch

# options: {peer=patch-br-int-to-br-ex_master-01-demo}

# Bridge br-int

# fail_mode: secure

# datapath_type: system

# Port "9fd1fa97d3b5e7b"

# Interface "9fd1fa97d3b5e7b"

# Port patch-br-int-to-br-ex_master-01-demo

# Interface patch-br-int-to-br-ex_master-01-demo

# type: patch

# options: {peer=patch-br-ex_master-01-demo-to-br-int}

# Port "58e1d0efb6c8c2e"

# Interface "58e1d0efb6c8c2e"

# Port "30195ca79f01bc4"

# Interface "30195ca79f01bc4"

# Port "10f1f38d0564fae"

# Interface "10f1f38d0564fae"

# Port "431208f15c76c56"

# Interface "431208f15c76c56"

# Port "057e84e928fce70"

# Interface "057e84e928fce70"

# Port "4e283efaf2d5646"

# Interface "4e283efaf2d5646"

# Port "8bc46d89de8a039"

# Interface "8bc46d89de8a039"

# Port bf55fdba2667ba8

# Interface bf55fdba2667ba8

# Port b6a2521573d6606

# Interface b6a2521573d6606

# Port patch-br-int-to-localnet.cnv_ovn_localnet_port

# Interface patch-br-int-to-localnet.cnv_ovn_localnet_port

# type: patch

# options: {peer=patch-localnet.cnv_ovn_localnet_port-to-br-int}

# Port "78534ac2a0363ac"

# Interface "78534ac2a0363ac"

# Port "6e9dd5224a95d0a"

# Interface "6e9dd5224a95d0a"

# Port ae6b4ac8a49d0e1

# Interface ae6b4ac8a49d0e1

# Port "57a625a7a04ae3c"

# Interface "57a625a7a04ae3c"

# Port "6862423e9a5a754"

# Interface "6862423e9a5a754"
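
The `ovs-vsctl show` output is long, and only the patch ports matter here. The sketch below filters such output (read from stdin) down to `port <-> peer` pairs. `patch_peers` is a hypothetical helper name, and the sample input is a trimmed copy of the output above.

```shell
# Sketch: list patch ports and their peers from `ovs-vsctl show` output on stdin.
# patch_peers is a hypothetical helper name.
patch_peers() {
  awk '
    # Remember the most recent port name, without quotes.
    $1 == "Port" { port = $2; gsub(/"/, "", port) }
    # A patch interface carries options: {peer=<other end>}.
    $1 == "options:" && match($0, /peer=[^},]+/) {
      print port " <-> " substr($0, RSTART + 5, RLENGTH - 5)
    }
  '
}

patch_peers <<'EOF'
    Bridge br-ex
        Port patch-localnet.cnv_ovn_localnet_port-to-br-int
            Interface patch-localnet.cnv_ovn_localnet_port-to-br-int
                type: patch
                options: {peer=patch-br-int-to-localnet.cnv_ovn_localnet_port}
        Port br-ex
            Interface br-ex
                type: internal
EOF
# prints:
# patch-localnet.cnv_ovn_localnet_port-to-br-int <-> patch-br-int-to-localnet.cnv_ovn_localnet_port
```

On a live node the same filter could be fed from the `ovs-vsctl show` command used above, run without `-t` so that no carriage returns end up in the output.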

# list the ovn logical routers

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl lr-list

# 3b0a3722-8d0a-4b47-a4fb-55123baa58f2 (GR_master-01-demo)

# 2b3e1161-6929-4ff3-a9d8-f96c8544dd8c (ovn_cluster_router)

# and check the route list of the router

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl lr-route-list GR_master-01-demo

# IPv4 Routes

# Route Table <main>:

# 169.254.169.0/29 169.254.169.4 dst-ip rtoe-GR_master-01-demo

# 10.132.0.0/14 100.64.0.1 dst-ip

# 0.0.0.0/0 192.168.99.1 dst-ip rtoe-GR_master-01-demo

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl lr-route-list ovn_cluster_router

# IPv4 Routes

# Route Table <main>:

# 100.64.0.2 100.64.0.2 dst-ip

# 10.132.0.0/14 100.64.0.2 src-ip

# check the routing policy

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl lr-policy-list GR_master-01-demo

# nothing

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl lr-policy-list ovn_cluster_router

# Routing Policies

# 1004 inport == "rtos-master-01-demo" && ip4.dst == 192.168.99.23 /* master-01-demo */ reroute 10.132.0.2

# 102 (ip4.src == $a4548040316634674295 || ip4.src == $a13607449821398607916) && ip4.dst == $a14918748166599097711 allow pkt_mark=1008

# 102 ip4.src == 10.132.0.0/14 && ip4.dst == 10.132.0.0/14 allow

# 102 ip4.src == 10.132.0.0/14 && ip4.dst == 100.64.0.0/16 allow

# list all the address sets

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl list Address_Set

# .....

# _uuid : a50f42ff-6f11-423d-ae1a-e1c6dfd5784b

# addresses : []

# external_ids : {ip-family=v4, "k8s.ovn.org/id"="default-network-controller:Namespace:openshift-nutanix-infra:v4", "k8s.ovn.org/name"=openshift-nutanix-infra, "k8s.ovn.org/owner-controller"=default-network-controller, "k8s.ovn.org/owner-type"=Namespace}

# name : a10781256116209244644

# _uuid : d18dd13f-5220-4aa9-8b45-a4ace95a0d8a

# addresses : ["10.132.0.3", "10.132.0.37"]

# external_ids : {ip-family=v4, "k8s.ovn.org/id"="default-network-controller:Namespace:openshift-network-diagnostics:v4", "k8s.ovn.org/name"=openshift-network-diagnostics, "k8s.ovn.org/owner-controller"=default-network-controller, "k8s.ovn.org/owner-type"=Namespace}

# name : a1966919964212966539

# look up, by name, the address sets referenced in the routing policy above

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Address_Set name=a4548040316634674295

# _uuid : 98aec42a-8191-411a-8401-fd659d7d8f67

# addresses : []

# external_ids : {ip-family=v4, "k8s.ovn.org/id"="default-network-controller:EgressIP:egressip-served-pods:v4", "k8s.ovn.org/name"=egressip-served-pods, "k8s.ovn.org/owner-controller"=default-network-controller, "k8s.ovn.org/owner-type"=EgressIP}

# name : a4548040316634674295

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Address_Set name=a13607449821398607916

# _uuid : 710f6749-66c7-41d1-a9d3-bb00989e16a2

# addresses : []

# external_ids : {ip-family=v4, "k8s.ovn.org/id"="default-network-controller:EgressService:egresssvc-served-pods:v4", "k8s.ovn.org/name"=egresssvc-served-pods, "k8s.ovn.org/owner-controller"=default-network-controller, "k8s.ovn.org/owner-type"=EgressService}

# name : a13607449821398607916

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Address_Set name=a14918748166599097711

# _uuid : f2efc9fd-1b15-4ca3-ae72-1a2ff1c831e6

# addresses : ["192.168.99.23"]

# external_ids : {ip-family=v4, "k8s.ovn.org/id"="default-network-controller:EgressIP:node-ips:v4", "k8s.ovn.org/name"=node-ips, "k8s.ovn.org/owner-controller"=default-network-controller, "k8s.ovn.org/owner-type"=EgressIP}

# name : a14918748166599097711
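
The three lookups above use names copied by hand from the routing policy match. As a minimal sketch, the hypothetical helper `extract_address_sets` pulls every `$a...` reference out of a match expression so the names can be fed to `ovn-nbctl find Address_Set`.

```shell
# Sketch: extract address set names ($a<digits>) from an OVN match expression.
# extract_address_sets is a hypothetical helper name.
extract_address_sets() {
  printf '%s\n' "$1" | grep -oE '\$a[0-9]+' | tr -d '$' | LC_ALL=C sort -u
}

policy='(ip4.src == $a4548040316634674295 || ip4.src == $a13607449821398607916) && ip4.dst == $a14918748166599097711'
extract_address_sets "$policy"
# prints:
# a13607449821398607916
# a14918748166599097711
# a4548040316634674295

# Possible use against the cluster (not run here):
# for AS in $(extract_address_sets "$policy"); do
#   oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Address_Set name=$AS
# done
```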

# from ovn, get switch list

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl ls-list

# 7f294c4e-fce4-4c16-b830-17d7ca545078 (ext_master-01-demo)

# 9b84fe16-da8c-4abb-b071-6c46c5191a68 (join)

# 5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)

# e22c4467-5d22-4fa2-83d7-d00d19c9684d (master-01-demo)

# 317dad87-bf59-42ba-b3a3-e3c8b51b19a9 (transit_switch)

# try to get the ACLs attached to each switch

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list ext_master-01-demo

# nothing

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list join

# nothing

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list localnet.cnv_ovn_localnet_switch

# nothing

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list master-01-demo

# to-lport 1001 (ip4.src==10.132.0.2) allow-related

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list transit_switch

# nothing
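
The five `acl-list` calls above repeat the same command per switch. A minimal sketch for driving them from the `ls-list` output instead: the hypothetical helper `switch_names` reads `ls-list` lines on stdin and prints only the switch names.

```shell
# Sketch: turn `ovn-nbctl ls-list` lines ("<uuid> (<name>)") into bare names.
# switch_names is a hypothetical helper name.
switch_names() {
  # Trailing whitespace (including a CR from a TTY) is tolerated.
  sed -nE 's/^[0-9a-f-]+ \((.*)\)[[:space:]]*$/\1/p'
}

printf '%s\n' \
  '7f294c4e-fce4-4c16-b830-17d7ca545078 (ext_master-01-demo)' \
  '5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)' | switch_names
# prints:
# ext_master-01-demo
# localnet.cnv_ovn_localnet_switch

# Possible use against the cluster (not run here):
# for SW in $(oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl ls-list | switch_names); do
#   echo "== $SW"
#   oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl acl-list "$SW"
# done
```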

# the network policy ACLs are not attached to a switch; we find them in the ACL table instead

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl list ACL | grep 192.168.77 -A 7 -B 7

# _uuid : e3fbdd41-3e7f-4a96-9491-b70b6f94d7de

# action : allow-related

# direction : to-lport

# external_ids : {direction=Ingress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-02:Ingress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-02", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.src == 192.168.77.1/32 && outport == @a2829002948383245342"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-02:Ingress:0"

# options : {}

# priority : 1001

# severity : []

# tier : 2

# _uuid : f1cc9a01-adc6-4664-b446-ba689f7128bc

# action : allow-related

# direction : from-lport

# external_ids : {direction=Egress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-02:Egress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-02", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.dst == 192.168.77.1/32 && inport == @a2829002948383245342"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-02:Egress:0"

# options : {apply-after-lb="true"}

# priority : 1001

# severity : []

# tier : 2

# _uuid : 65d51942-e031-4035-ab0a-183a00f1ca0d

# action : allow-related

# direction : to-lport

# external_ids : {direction=Ingress, gress-index="0", ip-block-index="1", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-01:Ingress:0:None:1", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.src == 192.168.77.92/32 && outport == @a2829001848871617131"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-01:Ingress:0"

# options : {}

# priority : 1001

# severity : []

# tier : 2

# --

# _uuid : 3ce03954-7d3c-4efd-a365-53afc84cb857

# action : allow-related

# direction : from-lport

# external_ids : {direction=Egress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-01:Egress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.dst == 192.168.77.91/32 && inport == @a679546803547591159"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-01:Egress:0"

# options : {apply-after-lb="true"}

# priority : 1001

# severity : []

# tier : 2

# _uuid : 65498494-b740-4ff4-a354-02507b7bbdb3

# action : allow-related

# direction : to-lport

# external_ids : {direction=Ingress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-01:Ingress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.src == 192.168.77.1/32 && outport == @a2829001848871617131"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-01:Ingress:0"

# options : {}

# priority : 1001

# severity : []

# tier : 2

# --

# _uuid : cd5e1be5-f02e-4fa4-ab1d-a08f3ce243ea

# action : allow-related

# direction : from-lport

# external_ids : {direction=Egress, gress-index="0", ip-block-index="1", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-01:Egress:0:None:1", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.dst == 192.168.77.1/32 && inport == @a2829001848871617131"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-01:Egress:0"

# options : {apply-after-lb="true"}

# priority : 1001

# severity : []

# tier : 2

# --

# _uuid : 454571c7-94e3-44e7-8b22-bbeee9458388

# action : allow-related

# direction : to-lport

# external_ids : {direction=Ingress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock:Ingress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.src == 192.168.77.92/32 && outport == @a1333591772177041409"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock:Ingress:0"

# options : {}

# priority : 1001

# severity : []

# tier : 2

# _uuid : eadaecf9-221a-4709-a6c9-b9d3fe018f37

# action : allow-related

# direction : to-lport

# external_ids : {direction=Ingress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-03:Ingress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-03", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.src == 192.168.77.71/32 && outport == @a2829004047894873553"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-03:Ingress:0"

# options : {}

# priority : 1001

# severity : []

# tier : 2

# --

# _uuid : a351006c-9368-4613-aadb-1be678467ea2

# action : allow-related

# direction : from-lport

# external_ids : {direction=Egress, gress-index="0", ip-block-index="2", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-01:Egress:0:None:2", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.dst == 192.168.77.92/32 && inport == @a2829001848871617131"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-01:Egress:0"

# options : {apply-after-lb="true"}

# priority : 1001

# severity : []

# tier : 2

# --

# _uuid : 35d5775e-29d5-4a05-9b4b-5d75a5619954

# action : allow-related

# direction : from-lport

# external_ids : {direction=Egress, gress-index="0", ip-block-index="0", "k8s.ovn.org/id"="localnet-cnv-network-controller:NetworkPolicy:llm-demo:allow-ipblock-cnv-01:Egress:0:None:0", "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-01", "k8s.ovn.org/owner-controller"=localnet-cnv-network-controller, "k8s.ovn.org/owner-type"=NetworkPolicy, port-policy-protocol=None}

# label : 0

# log : false

# match : "ip4.dst == 0.0.0.0/0 && ip4.dst != {192.168.77.0/24} && inport == @a2829001848871617131"

# meter : acl-logging

# name : "NP:llm-demo:allow-ipblock-cnv-01:Egress:0"

# options : {apply-after-lb="true"}

# priority : 1001

# severity : []

# tier : 2
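
Each ACL record names its owning NetworkPolicy in `external_ids` under the key `k8s.ovn.org/name`. The sketch below counts ACLs per policy from `ovn-nbctl list ACL` output on stdin. `acls_per_policy` is a hypothetical helper name, and the sample lines are shortened copies of the records above.

```shell
# Sketch: count ACL records per NetworkPolicy from `ovn-nbctl list ACL` output.
# acls_per_policy is a hypothetical helper name.
acls_per_policy() {
  grep -oE '"k8s\.ovn\.org/name"="[^"]+"' \
    | cut -d'"' -f4 \
    | LC_ALL=C sort \
    | uniq -c \
    | awk '{ print $2, $1 }'
}

acls_per_policy <<'EOF'
external_ids : {direction=Ingress, "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-02", "k8s.ovn.org/owner-type"=NetworkPolicy}
external_ids : {direction=Egress, "k8s.ovn.org/name"="llm-demo:allow-ipblock-cnv-02", "k8s.ovn.org/owner-type"=NetworkPolicy}
external_ids : {direction=Ingress, "k8s.ovn.org/name"="llm-demo:allow-ipblock", "k8s.ovn.org/owner-type"=NetworkPolicy}
EOF
# prints:
# llm-demo:allow-ipblock 1
# llm-demo:allow-ipblock-cnv-02 2
```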

# the ACLs are attached to port groups, so we can look them up through the port group named in the match

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Port_Group name=a2829001848871617131

# _uuid : 49892b19-bb75-4119-be55-c675a6539e30

# acls : [35d5775e-29d5-4a05-9b4b-5d75a5619954, 65498494-b740-4ff4-a354-02507b7bbdb3, 65d51942-e031-4035-ab0a-183a00f1ca0d, a351006c-9368-4613-aadb-1be678467ea2, cd5e1be5-f02e-4fa4-ab1d-a08f3ce243ea]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock-cnv-01}

# name : a2829001848871617131

# ports : [1596d9ca-b8dd-4a07-a96a-7c2334bc8a7d]

# Get the names of all port groups referenced by the ACLs that mention 192.168.77.x

# Extract the inport/outport port group names from the match expressions and strip the @ prefix:

PORT_GROUPS=$(oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl list ACL | grep '192.168.77' | sed -E 's/.*(inport|outport) == @([^"]*).*/\2/' | grep -v match | sort | uniq)

# List ACLs for each port group

for PG in $PORT_GROUPS

do

echo "==============================================="

echo "Info for port group $PG:"

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Port_Group name=$PG

echo

echo "Port info for port group $PG:"

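# resolve each port UUID in the group to its logical switch port name

for PORT in $(oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl --bare --columns=ports find Port_Group name=$PG); do oc exec ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl --bare --columns=name list Logical_Switch_Port $PORT; done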

echo "ACLs for port group $PG:"

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl --type=port-group acl-list $PG

echo

done

# oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl find Logical_Switch_Port | grep caa9cd48-3689-4917-98fc-ccd1af0a2171 -A 10

# ===============================================

# Info for port group a1333591772177041409:

# _uuid : f7496e97-b49d-49d3-882c-96a1733aa6f2

# acls : [454571c7-94e3-44e7-8b22-bbeee9458388]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock}

# name : a1333591772177041409

# ports : [caa9cd48-3689-4917-98fc-ccd1af0a2171]

# ACLs for port group a1333591772177041409:

# to-lport 1001 (ip4.src == 192.168.77.92/32 && outport == @a1333591772177041409) allow-related

# ===============================================

# Info for port group a2829001848871617131:

# _uuid : 49892b19-bb75-4119-be55-c675a6539e30

# acls : [35d5775e-29d5-4a05-9b4b-5d75a5619954, 65498494-b740-4ff4-a354-02507b7bbdb3, 65d51942-e031-4035-ab0a-183a00f1ca0d, a351006c-9368-4613-aadb-1be678467ea2, cd5e1be5-f02e-4fa4-ab1d-a08f3ce243ea]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock-cnv-01}

# name : a2829001848871617131

# ports : [1596d9ca-b8dd-4a07-a96a-7c2334bc8a7d]

# ACLs for port group a2829001848871617131:

# from-lport 1001 (ip4.dst == 0.0.0.0/0 && ip4.dst != {192.168.77.0/24} && inport == @a2829001848871617131) allow-related [after-lb]

# from-lport 1001 (ip4.dst == 192.168.77.1/32 && inport == @a2829001848871617131) allow-related [after-lb]

# from-lport 1001 (ip4.dst == 192.168.77.92/32 && inport == @a2829001848871617131) allow-related [after-lb]

# to-lport 1001 (ip4.src == 192.168.77.1/32 && outport == @a2829001848871617131) allow-related

# to-lport 1001 (ip4.src == 192.168.77.92/32 && outport == @a2829001848871617131) allow-related

# ===============================================

# Info for port group a2829002948383245342:

# _uuid : 253e7411-5aea-4b87-9a7f-f49e4883182e

# acls : [e3fbdd41-3e7f-4a96-9491-b70b6f94d7de, f1cc9a01-adc6-4664-b446-ba689f7128bc]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock-cnv-02}

# name : a2829002948383245342

# ports : [01dc6763-c512-4b5d-8b26-844c72817aee]

# ACLs for port group a2829002948383245342:

# from-lport 1001 (ip4.dst == 192.168.77.1/32 && inport == @a2829002948383245342) allow-related [after-lb]

# to-lport 1001 (ip4.src == 192.168.77.1/32 && outport == @a2829002948383245342) allow-related

# ===============================================

# Info for port group a2829004047894873553:

# _uuid : 801cb68b-ce04-4982-9e13-c534dfb35289

# acls : [eadaecf9-221a-4709-a6c9-b9d3fe018f37]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock-cnv-03}

# name : a2829004047894873553

# ports : [f73a1106-48fe-4e94-9c04-61132268ca49]

# ACLs for port group a2829004047894873553:

# to-lport 1001 (ip4.src == 192.168.77.71/32 && outport == @a2829004047894873553) allow-related

# ===============================================

# Info for port group a679546803547591159:

# _uuid : d2a1ce0d-1440-452f-9e86-6c9731b1299e

# acls : [3ce03954-7d3c-4efd-a365-53afc84cb857]

# external_ids : {"k8s.ovn.org/network"=localnet-cnv, name=llm-demo_allow-ipblock-01}

# name : a679546803547591159

# ports : [f73a1106-48fe-4e94-9c04-61132268ca49]

# ACLs for port group a679546803547591159:

# from-lport 1001 (ip4.dst == 192.168.77.91/32 && inport == @a679546803547591159) allow-related [after-lb]
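
To read the egress ACLs of port group `a2829001848871617131`, it helps to test a few destinations by hand. The sketch below reimplements the three `from-lport` matches in plain shell arithmetic. All helper names are hypothetical, octets are assumed to be plain decimals without leading zeros, and the result only says whether one of the listed allow ACLs matches, not what any other ACL elsewhere would do.

```shell
# Sketch: which egress allow ACL of port group a2829001848871617131 matches
# a given destination. Helper names are hypothetical.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

ip_in_cidr() {
  local ip net bits mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  bits=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}

egress_match() {
  local dst="$1"
  if ip_in_cidr "$dst" 192.168.77.1/32; then
    echo "allow-related (192.168.77.1/32)"
  elif ip_in_cidr "$dst" 192.168.77.92/32; then
    echo "allow-related (192.168.77.92/32)"
  elif ! ip_in_cidr "$dst" 192.168.77.0/24; then
    # ip4.dst == 0.0.0.0/0 && ip4.dst != {192.168.77.0/24}
    echo "allow-related (0.0.0.0/0 except 192.168.77.0/24)"
  else
    echo "no allow ACL matches"
  fi
}

egress_match 8.8.8.8         # prints allow-related (0.0.0.0/0 except 192.168.77.0/24)
egress_match 192.168.77.92   # prints allow-related (192.168.77.92/32)
egress_match 192.168.77.93   # prints no allow ACL matches
```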

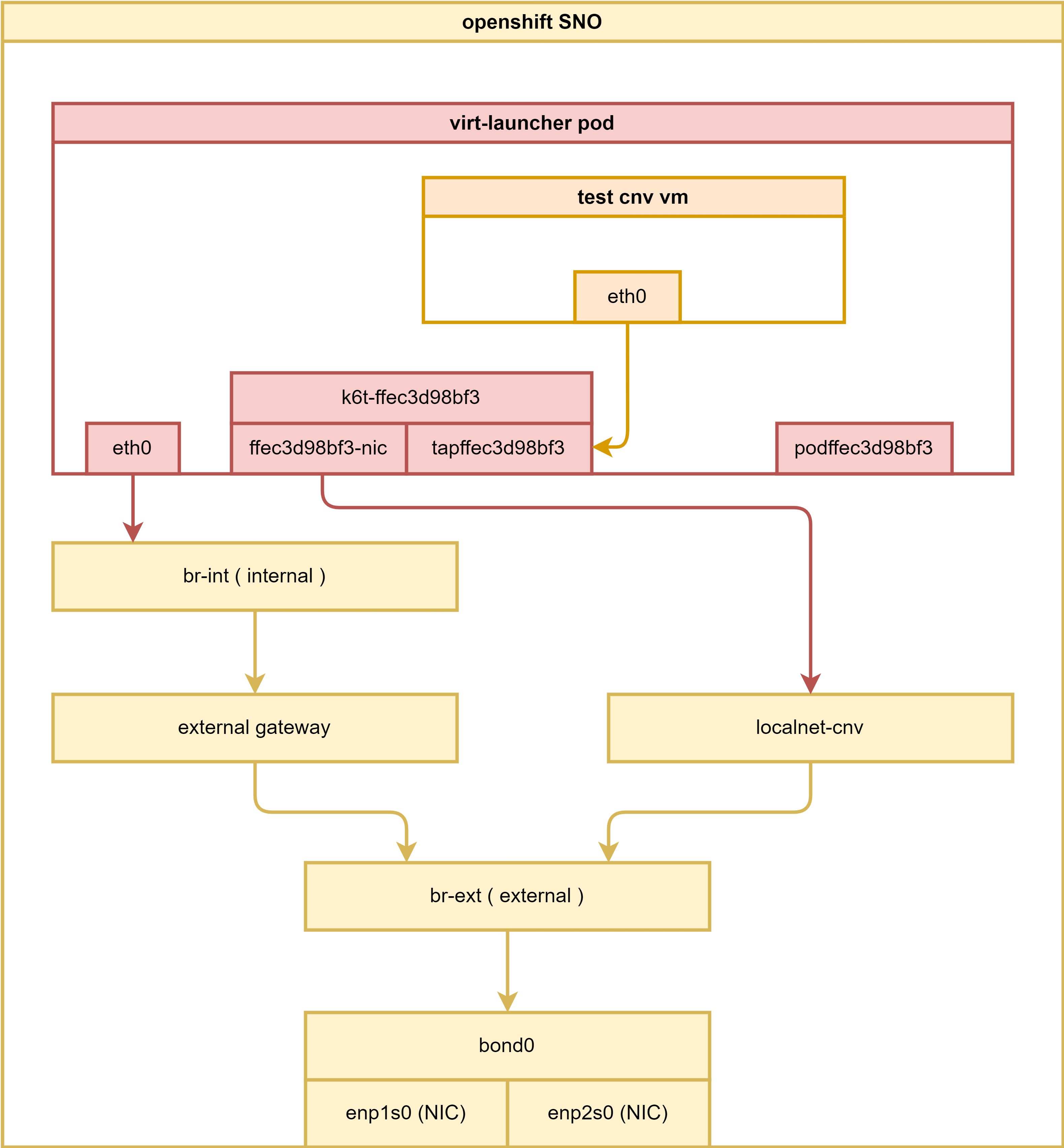

for cnv

Let’s take a look at how networks communicate in a CNV scenario.

oc get vmi

# NAME AGE PHASE IP NODENAME READY

# centos-stream9-gold-rabbit-80 50m Running 192.168.77.71 master-01-demo True

# centos-stream9-green-ferret-41 50m Running 192.168.77.72 master-01-demo True

oc get pod -n llm-demo

# NAME READY STATUS RESTARTS AGE

# tinypod 1/1 Running 3 3d21h

# tinypod-01 1/1 Running 3 3d21h

# tinypod-02 1/1 Running 3 3d21h

# virt-launcher-centos-stream9-gold-rabbit-80-vjfdm 1/1 Running 0 9h

# virt-launcher-centos-stream9-green-ferret-41-ksmzt 1/1 Running 0 9h

pod_name=$(oc get pods -n llm-demo | grep 'centos-stream9-gold-rabbit-80' | awk '{print $1}')

echo $pod_name

# virt-launcher-centos-stream9-gold-rabbit-80-mkm5c

oc exec -it $pod_name -n llm-demo -- ip a

# 1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

# link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

# inet 127.0.0.1/8 scope host lo

# valid_lft forever preferred_lft forever

# inet6 ::1/128 scope host

# valid_lft forever preferred_lft forever

# 2: eth0@if147: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default

# link/ether 0a:58:0a:84:00:3c brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet 10.132.0.60/23 brd 10.132.1.255 scope global eth0

# valid_lft forever preferred_lft forever

# inet6 fe80::858:aff:fe84:3c/64 scope link

# valid_lft forever preferred_lft forever

# 3: ffec3d98bf3-nic@if148: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master k6t-ffec3d98bf3 state UP group default

# link/ether aa:fc:47:b3:ba:cc brd ff:ff:ff:ff:ff:ff link-netnsid 0

# inet6 fe80::a8fc:47ff:feb3:bacc/64 scope link

# valid_lft forever preferred_lft forever

# 4: k6t-ffec3d98bf3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default qlen 1000

# link/ether 82:39:f2:cf:92:fe brd ff:ff:ff:ff:ff:ff

# inet6 fe80::a8fc:47ff:feb3:bacc/64 scope link

# valid_lft forever preferred_lft forever

# 5: tapffec3d98bf3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc fq_codel master k6t-ffec3d98bf3 state UP group default qlen 1000

# link/ether 82:39:f2:cf:92:fe brd ff:ff:ff:ff:ff:ff

# inet6 fe80::8039:f2ff:fecf:92fe/64 scope link

# valid_lft forever preferred_lft forever

# 6: podffec3d98bf3: <BROADCAST,NOARP> mtu 1400 qdisc noop state DOWN group default qlen 1000

# link/ether 02:00:a3:00:00:01 brd ff:ff:ff:ff:ff:ff

# inet6 fe80::a3ff:fe00:1/64 scope link

# valid_lft forever preferred_lft forever

oc exec -it $pod_name -n llm-demo -- ip r

# default via 10.132.0.1 dev eth0

# 10.132.0.0/23 dev eth0 proto kernel scope link src 10.132.0.60

# 10.132.0.0/14 via 10.132.0.1 dev eth0

# 100.64.0.0/16 via 10.132.0.1 dev eth0

# 172.22.0.0/16 via 10.132.0.1 dev eth0

oc exec -it $pod_name -n llm-demo -- ip tap

# tapffec3d98bf3: tap vnet_hdr persist user 107 group 107

oc exec -it $pod_name -n llm-demo -- ip a show type bridge

# 4: k6t-ffec3d98bf3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP group default qlen 1000

# link/ether 82:39:f2:cf:92:fe brd ff:ff:ff:ff:ff:ff

# inet6 fe80::a8fc:47ff:feb3:bacc/64 scope link

# valid_lft forever preferred_lft forever

oc exec -it $pod_name -n llm-demo -- ip a show type dummy

# 6: podffec3d98bf3: <BROADCAST,NOARP> mtu 1400 qdisc noop state DOWN group default qlen 1000

# link/ether 02:00:a3:00:00:01 brd ff:ff:ff:ff:ff:ff

# inet6 fe80::a3ff:fe00:1/64 scope link

# valid_lft forever preferred_lft forever

oc exec -it $pod_name -n llm-demo -- ip link show type bridge_slave

# 3: ffec3d98bf3-nic@if148: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master k6t-ffec3d98bf3 state UP mode DEFAULT group default

# link/ether aa:fc:47:b3:ba:cc brd ff:ff:ff:ff:ff:ff link-netnsid 0

# 5: tapffec3d98bf3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc fq_codel master k6t-ffec3d98bf3 state UP mode DEFAULT group default qlen 1000

# link/ether 82:39:f2:cf:92:fe brd ff:ff:ff:ff:ff:ff
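
The output shows that the veth `ffec3d98bf3-nic` and the tap device `tapffec3d98bf3` are both enslaved to the bridge `k6t-ffec3d98bf3`, which is how the VM's tap is wired to the secondary network inside the launcher pod. A minimal sketch for listing such memberships from `ip link` output on stdin follows. `bridge_members` is a hypothetical helper name.

```shell
# Sketch: print "<interface> <master>" for every enslaved link in `ip link` output.
# bridge_members is a hypothetical helper name.
bridge_members() {
  awk '/ master / {
    name = $2
    sub(/:$/, "", name)    # drop the trailing colon
    sub(/@.*$/, "", name)  # drop the @ifN peer suffix
    for (i = 1; i < NF; i++) if ($i == "master") print name, $(i + 1)
  }'
}

bridge_members <<'EOF'
2: eth0@if147: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP mode DEFAULT group default
3: ffec3d98bf3-nic@if148: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master k6t-ffec3d98bf3 state UP mode DEFAULT group default
5: tapffec3d98bf3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc fq_codel master k6t-ffec3d98bf3 state UP mode DEFAULT group default qlen 1000
EOF
# prints:
# ffec3d98bf3-nic k6t-ffec3d98bf3
# tapffec3d98bf3 k6t-ffec3d98bf3
```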

oc exec -it $pod_name -n llm-demo -- ip link show type veth

# 2: eth0@if147: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue state UP mode DEFAULT group default

# link/ether 0a:58:0a:84:00:3c brd ff:ff:ff:ff:ff:ff link-netnsid 0

# 3: ffec3d98bf3-nic@if148: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master k6t-ffec3d98bf3 state UP mode DEFAULT group default

# link/ether aa:fc:47:b3:ba:cc brd ff:ff:ff:ff:ff:ff link-netnsid 0

oc exec -it $pod_name -n llm-demo -- tc qdisc show dev tapffec3d98bf3

# qdisc fq_codel 0: root refcnt 2 limit 10240p flows 1024 quantum 1414 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

oc exec -it $pod_name -n llm-demo -- tc qdisc show

# qdisc noqueue 0: dev lo root refcnt 2

# qdisc noqueue 0: dev eth0 root refcnt 2

# qdisc noqueue 0: dev ffec3d98bf3-nic root refcnt 2

# qdisc noqueue 0: dev k6t-ffec3d98bf3 root refcnt 2

# qdisc fq_codel 0: dev tapffec3d98bf3 root refcnt 2 limit 10240p flows 1024 quantum 1414 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

oc exec -it $pod_name -n llm-demo -- tc -s -p qdisc show dev tapffec3d98bf3 ingress

# nothing

# on master-01: if147 and if148 are the peer interface indexes shown after the @ in the pod's ip a output

ip a show dev if147

# 147: 13a96759b25594a@if2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master ovs-system state UP group default

# link/ether 56:0e:48:92:0c:0c brd ff:ff:ff:ff:ff:ff link-netns c9cfaa12-f497-4e17-81b2-d32310b46849

# inet6 fe80::540e:48ff:fe92:c0c/64 scope link

# valid_lft forever preferred_lft forever

ip a show dev if148

# 148: 13a96759b2559_3@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1400 qdisc noqueue master ovs-system state UP group default

# link/ether 42:98:1b:dc:4b:83 brd ff:ff:ff:ff:ff:ff link-netns c9cfaa12-f497-4e17-81b2-d32310b46849

# inet6 fe80::4098:1bff:fedc:4b83/64 scope link

# valid_lft forever preferred_lft forever
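
The link between a pod interface and its host-side veth is the index after `@if`. As a small sketch, the hypothetical helper `peer_ifindex` extracts that index from an interface label as printed by `ip a`, so the matching host interface can be looked up by number.

```shell
# Sketch: extract the peer interface index from a label such as "eth0@if147:".
# peer_ifindex is a hypothetical helper name.
peer_ifindex() {
  printf '%s\n' "$1" | sed -nE 's/^[^@]+@if([0-9]+):?$/\1/p'
}

peer_ifindex 'eth0@if147:'              # prints 147
peer_ifindex 'ffec3d98bf3-nic@if148:'   # prints 148
```

Interfaces without a peer, such as `lo:`, produce no output.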

# get the ovn pod, so we can exec into it

VAR_POD=`oc get pod -n openshift-ovn-kubernetes -o wide | grep master-01-demo | grep ovnkube-node | awk '{print $1}'`

# get ovn information about the vm's interface on the localnet (secondary) network

# we can see it is a port on the localnet switch

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl show | grep -i 02:00:a3:00:00:01 -A 10 -B 10

# ......

# switch 5ba54a76-89fb-4610-95c9-b3262a3bb55c (localnet.cnv_ovn_localnet_switch)

# port localnet.cnv_ovn_localnet_port

# type: localnet

# addresses: ["unknown"]

# port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-green-ferret-41-bwdqg

# addresses: ["02:00:a3:00:00:02"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-01

# addresses: ["0a:58:c0:a8:4d:5c 192.168.77.92"]

# port llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-gold-rabbit-80-mkm5c

# addresses: ["02:00:a3:00:00:01"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod-02

# addresses: ["0a:58:c0:a8:4d:5d 192.168.77.93"]

# port llm.demo.llm.demo.localnet.network_llm-demo_tinypod

# addresses: ["0a:58:c0:a8:4d:5b 192.168.77.91"]

# ......

oc exec -it ${VAR_POD} -c ovn-controller -n openshift-ovn-kubernetes -- ovn-nbctl list Logical_Switch_Port | grep -i 02:00:a3:00:00:01 -A 10 -B 10

# ......

# _uuid : 64247ac4-52b7-440a-aec8-320cc1d742dd

# addresses : ["02:00:a3:00:00:01"]

# dhcpv4_options : []

# dhcpv6_options : []

# dynamic_addresses : []

# enabled : []

# external_ids : {"k8s.ovn.org/nad"="llm-demo/llm-demo-localnet-network", "k8s.ovn.org/network"=localnet-cnv, "k8s.ovn.org/topology"=localnet, namespace=llm-demo, pod="true"}

# ha_chassis_group : []

# mirror_rules : []

# name : llm.demo.llm.demo.localnet.network_llm-demo_virt-launcher-centos-stream9-gold-rabbit-80-mkm5c

# options : {iface-id-ver="b263f64c-a1c9-4ac8-a403-bd9fe8a4fdda", requested-chassis=master-01-demo}

# parent_name : []

# port_security : ["02:00:a3:00:00:01"]

# tag : []

# tag_request : []

# type : ""

# up : true

# ......

oc exec -it $pod_name -n llm-demo -- tc -s -p qdisc

# qdisc noqueue 0: dev lo root refcnt 2

# Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

# backlog 0b 0p requeues 0

# qdisc noqueue 0: dev eth0 root refcnt 2

# Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

# backlog 0b 0p requeues 0

# qdisc noqueue 0: dev ffec3d98bf3-nic root refcnt 2

# Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

# backlog 0b 0p requeues 0

# qdisc noqueue 0: dev k6t-ffec3d98bf3 root refcnt 2

# Sent 0 bytes 0 pkt (dropped 0, overlimits 0 requeues 0)

# backlog 0b 0p requeues 0

# qdisc fq_codel 0: dev tapffec3d98bf3 root refcnt 2 limit 10240p flows 1024 quantum 1414 target 5ms interval 100ms memory_limit 32Mb ecn drop_batch 64

# Sent 100720 bytes 667 pkt (dropped 0, overlimits 0 requeues 0)

# backlog 0b 0p requeues 0

# maxpacket 70 drop_overlimit 0 new_flow_count 1 ecn_mark 0

# new_flows_len 0 old_flows_len 0

oc exec -it $pod_name -n llm-demo -- ps aufx ww

# USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND

# qemu 199 0.0 0.0 7148 3236 pts/0 Rs+ 06:57 0:00 ps aufx ww

# qemu 1 0.0 0.0 1323636 18612 ? Ssl 04:28 0:00 /usr/bin/virt-launcher-monitor --qemu-timeout 287s --name centos-stream9-gold-rabbit-80 --uid 38ea74b9-2537-461d-8fea-6332b2c5e527 --namespace llm-demo --kubevirt-share-dir /var/run/kubevirt --ephemeral-disk-dir /var/run/kubevirt-ephemeral-disks --container-disk-dir /var/run/kubevirt/container-disks --grace-period-seconds 195 --hook-sidecars 0 --ovmf-path /usr/share/OVMF --run-as-nonroot

# qemu 12 0.0 0.1 2556556 64284 ? Sl 04:28 0:03 /usr/bin/virt-launcher --qemu-timeout 287s --name centos-stream9-gold-rabbit-80 --uid 38ea74b9-2537-461d-8fea-6332b2c5e527 --namespace llm-demo --kubevirt-share-dir /var/run/kubevirt --ephemeral-disk-dir /var/run/kubevirt-ephemeral-disks --container-disk-dir /var/run/kubevirt/container-disks --grace-period-seconds 195 --hook-sidecars 0 --ovmf-path /usr/share/OVMF --run-as-nonroot

# qemu 26 0.0 0.0 1360584 28512 ? Sl 04:28 0:03 \_ /usr/sbin/virtqemud -f /var/run/libvirt/virtqemud.conf

# qemu 27 0.0 0.0 104612 14964 ? Sl 04:28 0:00 \_ /usr/sbin/virtlogd -f /etc/libvirt/virtlogd.conf

# qemu 77 0.8 1.4 3016972 812124 ? Sl 04:28 1:12 /usr/libexec/qemu-kvm -name guest=llm-demo_centos-stream9-gold-rabbit-80,debug-threads=on -S -object {"qom-type":"secret","id":"masterKey0","format":"raw","file":"/var/run/kubevirt-private/libvirt/qemu/lib/domain-1-llm-demo_centos-stre/master-key.aes"} -machine pc-q35-rhel9.2.0,usb=off,dump-guest-core=off,memory-backend=pc.ram -accel kvm -cpu Snowridge,ss=on,vmx=on,fma=on,pcid=on,avx=on,f16c=on,hypervisor=on,tsc-adjust=on,bmi1=on,avx2=on,bmi2=on,invpcid=on,avx512f=on,avx512dq=on,adx=on,avx512ifma=on,avx512cd=on,avx512bw=on,avx512vl=on,avx512vbmi=on,pku=on,avx512vbmi2=on,vaes=on,vpclmulqdq=on,avx512vnni=on,avx512bitalg=on,avx512-vpopcntdq=on,rdpid=on,fsrm=on,md-clear=on,stibp=on,xsaves=on,abm=on,ibpb=on,ibrs=on,amd-stibp=on,amd-ssbd=on,rdctl-no=on,ibrs-all=on,skip-l1dfl-vmentry=on,mds-no=on,pschange-mc-no=on,mpx=off,clwb=off,cldemote=off,movdiri=off,movdir64b=off,core-capability=off,split-lock-detect=off -m 2048 -object {"qom-type":"memory-backend-ram","id":"pc.ram","size":2147483648} -overcommit mem-lock=off -smp 1,sockets=1,dies=1,cores=1,threads=1 -object {"qom-type":"iothread","id":"iothread1"} -uuid 9d13aeff-0832-5cdb-bf31-19c338276374 -smbios type=1,manufacturer=Red Hat,product=OpenShift Virtualization,version=4.15.3,uuid=9d13aeff-0832-5cdb-bf31-19c338276374,family=Red Hat -no-user-config -nodefaults -chardev socket,id=charmonitor,fd=18,server=on,wait=off -mon chardev=charmonitor,id=monitor,mode=control -rtc base=utc -no-shutdown -boot strict=on -device {"driver":"pcie-root-port","port":16,"chassis":1,"id":"pci.1","bus":"pcie.0","multifunction":true,"addr":"0x2"} -device {"driver":"pcie-root-port","port":17,"chassis":2,"id":"pci.2","bus":"pcie.0","addr":"0x2.0x1"} -device {"driver":"pcie-root-port","port":18,"chassis":3,"id":"pci.3","bus":"pcie.0","addr":"0x2.0x2"} -device {"driver":"pcie-root-port","port":19,"chassis":4,"id":"pci.4","bus":"pcie.0","addr":"0x2.0x3"} -device 
# {"driver":"pcie-root-port","port":20,"chassis":5,"id":"pci.5","bus":"pcie.0","addr":"0x2.0x4"} -device {"driver":"pcie-root-port","port":21,"chassis":6,"id":"pci.6","bus":"pcie.0","addr":"0x2.0x5"} -device {"driver":"pcie-root-port","port":22,"chassis":7,"id":"pci.7","bus":"pcie.0","addr":"0x2.0x6"} -device {"driver":"pcie-root-port","port":23,"chassis":8,"id":"pci.8","bus":"pcie.0","addr":"0x2.0x7"} -device {"driver":"pcie-root-port","port":24,"chassis":9,"id":"pci.9","bus":"pcie.0","multifunction":true,"addr":"0x3"} -device {"driver":"pcie-root-port","port":25,"chassis":10,"id":"pci.10","bus":"pcie.0","addr":"0x3.0x1"} -device {"driver":"pcie-root-port","port":26,"chassis":11,"id":"pci.11","bus":"pcie.0","addr":"0x3.0x2"} -device {"driver":"virtio-scsi-pci-non-transitional","id":"scsi0","bus":"pci.5","addr":"0x0"} -device {"driver":"virtio-serial-pci-non-transitional","id":"virtio-serial0","bus":"pci.6","addr":"0x0"} -blockdev {"driver":"host_device","filename":"/dev/rootdisk","aio":"native","node-name":"libvirt-2-storage","cache":{"direct":true,"no-flush":false},"auto-read-only":true,"discard":"unmap"} -blockdev {"node-name":"libvirt-2-format","read-only":false,"discard":"unmap","cache":{"direct":true,"no-flush":false},"driver":"raw","file":"libvirt-2-storage"} -device {"driver":"virtio-blk-pci-non-transitional","bus":"pci.7","addr":"0x0","drive":"libvirt-2-format","id":"ua-rootdisk","bootindex":1,"write-cache":"on","werror":"stop","rerror":"stop"} -blockdev {"driver":"file","filename":"/var/run/kubevirt-ephemeral-disks/cloud-init-data/llm-demo/centos-stream9-gold-rabbit-80/noCloud.iso","node-name":"libvirt-1-storage","cache":{"direct":true,"no-flush":false},"auto-read-only":true,"discard":"unmap"} -blockdev {"node-name":"libvirt-1-format","read-only":false,"discard":"unmap","cache":{"direct":true,"no-flush":false},"driver":"raw","file":"libvirt-1-storage"} -device 
# {"driver":"virtio-blk-pci-non-transitional","bus":"pci.8","addr":"0x0","drive":"libvirt-1-format","id":"ua-cloudinitdisk","write-cache":"on","werror":"stop","rerror":"stop"} -netdev {"type":"tap","fd":"19","vhost":true,"vhostfd":"21","id":"hostua-nic-yellow-duck-37"} -device {"driver":"virtio-net-pci-non-transitional","host_mtu":1400,"netdev":"hostua-nic-yellow-duck-37","id":"ua-nic-yellow-duck-37","mac":"02:00:a3:00:00:01","bus":"pci.1","addr":"0x0","romfile":""} -chardev socket,id=charserial0,fd=16,server=on,wait=off -device {"driver":"isa-serial","chardev":"charserial0","id":"serial0","index":0} -chardev socket,id=charchannel0,fd=17,server=on,wait=off -device {"driver":"virtserialport","bus":"virtio-serial0.0","nr":1,"chardev":"charchannel0","id":"channel0","name":"org.qemu.guest_agent.0"} -audiodev {"id":"audio1","driver":"none"} -vnc vnc=unix:/var/run/kubevirt-private/38ea74b9-2537-461d-8fea-6332b2c5e527/virt-vnc,audiodev=audio1 -device {"driver":"VGA","id":"video0","vgamem_mb":16,"bus":"pcie.0","addr":"0x1"} -device {"driver":"virtio-balloon-pci-non-transitional","id":"balloon0","free-page-reporting":true,"bus":"pci.9","addr":"0x0"} -object {"qom-type":"rng-random","id":"objrng0","filename":"/dev/urandom"} -device {"driver":"virtio-rng-pci-non-transitional","rng":"objrng0","id":"rng0","bus":"pci.10","addr":"0x0"} -sandbox on,obsolete=deny,elevateprivileges=deny,spawn=deny,resourcecontrol=deny -msg timestamp=on

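# the qemu command line above uses a tap netdev with "vhost":true; for background on vhost-net, see:

# https://www.redhat.com/en/blog/hands-vhost-net-do-or-do-not-there-no-try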
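
# A minimal sketch, not part of the original session: the qemu-kvm command line
# is very long, so a small filter can pull out just the -netdev argument to
# check the tap/vhost settings. show_netdev is a hypothetical helper name; it
# assumes the -netdev value is a single flat JSON object, as in the output above.
show_netdev() {
  # -o prints only the matching part; -- stops option parsing because the
  # pattern itself starts with a dash.
  grep -o -- '-netdev {[^}]*}'
}

# Possible usage (without -t, so the output can be piped cleanly):
#   oc exec $pod_name -n llm-demo -- ps aufx ww | show_netdev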

# list the libvirt domains managed inside the virt-launcher pod

oc exec -it $pod_name -n llm-demo -- virsh list --all

# Authorization not available. Check if polkit service is running or see debug message for more information.

# Id Name State

# --------------------------------------------------------

# 1 llm-demo_centos-stream9-gold-rabbit-80 running

# dump the libvirt domain XML of the running VM

oc exec -it $pod_name -n llm-demo -- virsh dumpxml llm-demo_centos-stream9-gold-rabbit-80

# Authorization not available. Check if polkit service is running or see debug message for more information.

# <domain type='kvm' id='1'>

# <name>llm-demo_centos-stream9-gold-rabbit-80</name>

# <uuid>9d13aeff-0832-5cdb-bf31-19c338276374</uuid>

# <metadata>

# <kubevirt xmlns="http://kubevirt.io">

# <uid/>

# </kubevirt>

# </metadata>

# <memory unit='KiB'>2097152</memory>

# <currentMemory unit='KiB'>2097152</currentMemory>

# <vcpu placement='static'>1</vcpu>

# <iothreads>1</iothreads>

# <sysinfo type='smbios'>

# <system>

# <entry name='manufacturer'>Red Hat</entry>

# <entry name='product'>OpenShift Virtualization</entry>

# <entry name='version'>4.15.3</entry>

# <entry name='uuid'>9d13aeff-0832-5cdb-bf31-19c338276374</entry>

# <entry name='family'>Red Hat</entry>

# </system>

# </sysinfo>

# <os>

# <type arch='x86_64' machine='pc-q35-rhel9.2.0'>hvm</type>

# <boot dev='hd'/>

# <smbios mode='sysinfo'/>

# </os>

# <features>

# <acpi/>

# </features>

# <cpu mode='custom' match='exact' check='full'>

# <model fallback='forbid'>Snowridge</model>

# <vendor>Intel</vendor>

# <topology sockets='1' dies='1' cores='1' threads='1'/>

# <feature policy='require' name='ss'/>

# <feature policy='require' name='vmx'/>

# <feature policy='require' name='fma'/>

# <feature policy='require' name='pcid'/>

# <feature policy='require' name='avx'/>

# <feature policy='require' name='f16c'/>

# <feature policy='require' name='hypervisor'/>

# <feature policy='require' name='tsc_adjust'/>

# <feature policy='require' name='bmi1'/>

# <feature policy='require' name='avx2'/>

# <feature policy='require' name='bmi2'/>

# <feature policy='require' name='invpcid'/>

# <feature policy='require' name='avx512f'/>

# <feature policy='require' name='avx512dq'/>

# <feature policy='require' name='adx'/>

# <feature policy='require' name='avx512ifma'/>

# <feature policy='require' name='avx512cd'/>

# <feature policy='require' name='avx512bw'/>

# <feature policy='require' name='avx512vl'/>

# <feature policy='require' name='avx512vbmi'/>

# <feature policy='require' name='pku'/>

# <feature policy='require' name='avx512vbmi2'/>

# <feature policy='require' name='vaes'/>

# <feature policy='require' name='vpclmulqdq'/>

# <feature policy='require' name='avx512vnni'/>

# <feature policy='require' name='avx512bitalg'/>

# <feature policy='require' name='avx512-vpopcntdq'/>